Technical Overview of the Anthropic AI Espionage Attack for SOC Teams

The Anthropic AI espionage case proves attackers trust autonomous agents. To counter machine-speed threats, SOCs must adopt and trust AI to handle 90% of the defense workload.

The first publicly documented, large-scale AI-orchestrated cyber-espionage campaign is now out in the open. Anthropic disclosed that threat actors (assessed with high confidence as a Chinese state-sponsored group) misused Claude Code to run the bulk of an intrusion targeting roughly 30 global organizations across tech, finance, chemical manufacturing, and government.

This attack should serve as a wake-up call, not because of what it is, but because of what it enables. The attackers used written scripts and known vulnerabilities, with AI primarily acting as an orchestration and reconnaissance layer: a "script kiddie" rather than a fully autonomous hacker. This is just the start.

In the near future, the capabilities demonstrated here will rapidly accelerate. We can expect to see actual malware that writes itself, finds and exploits vulnerabilities on the fly, and evades defenses in smart, adaptive ways. This shift means that the assumptions guiding SOC teams are changing.

What Actually Happened: The Technical Anatomy

The most critical takeaway from this campaign is not the technology used, but the level of trust the attackers placed in the AI. By trusting the model to carry out complex, multi-stage operations without human intervention, they unlocked significant, scalable capabilities far beyond human tempo.

1. Attackers “Jailbroke” the Model

Claude’s safeguards weren’t broken with a single jailbreak prompt. The actors decomposed malicious tasks into small, plausible “red-team testing” requests. The model believed it was legitimately supporting a pentest workflow. This matters because it shows that attackers don’t need to “break” an LLM. They just need to redirect its context and trust it to complete the mission.

2. AI Performed the Operational Heavy Lifting

The attackers trusted Claude Code to execute the campaign autonomously as an agentic chain:

- Scanning for exposed surfaces

- Enumerating systems and sensitive databases

- Writing and iterating exploit code

- Harvesting credentials and moving laterally

- Packaging and exfiltrating data

Humans stepped in only at a few critical junctures, mainly to validate targets, approve next steps, or correct the agent when it hallucinated. The bulk of the execution was delegated, demonstrating the attackers’ trust in the AI’s consistency and thoroughness.

3. Scale and Tempo Were Beyond Human Patterns

The agent fired thousands of requests. Traditional SOC playbooks and anomaly models assume slower human-driven actions, distinct operator fingerprints, and pauses due to errors or tool switching. Agentic AI has none of those constraints. The campaign demonstrated a tempo and scale that is only possible when the human operator takes a massive step back and trusts the machine to work at machine speed.

4. Anthropic Detected It and Shut It Down

Anthropic’s monitoring flagged abnormal usage patterns. The company then disabled the accounts involved, alerted impacted organizations, worked with governments, and released a technical breakdown of how the AI was misused.

The Defender’s Mandate: Adopt and Trust Defensive AI

Attackers have already made the mental pivot, treating AI as a trusted, high-velocity force multiplier for offense. Defenders must meet this shift head-on. If you don't adopt defensive AI, you are falling behind adversaries who already have.

Defenders must further adopt AI and trust it to carry out workflows where it has a decisive advantage: consistency, thoroughness, speed, and scale.

1. Attack Velocity Requires Machine Speed Defense

When an agent can operate at 50–200x human tempo, your detection assumptions rot fast. SOC teams need to treat AI-driven intrusion patterns as high-frequency anomalies, not human-like sequences.

2. Trust AI for High-Volume, Deterministic Workflows

Existing detection pipelines tuned on human patterns will miss sub-second sequential operations, machine-generated payload variants, and coordinated micro-actions. Agentic workloads look more like automation platforms than human operators.

Defenders need to accept the uncomfortable reality that manual triage for these types of intrusions is pointless. You need systems that can sift through massive alert loads and isolate and contain suspicious agentic behavior as it unfolds.

This is where the defense’s trust must be applied. Only the genuinely complex cases should ever reach a human. The SOC must delegate and trust AI to handle triage, investigation, and response with machine-like consistency.

3. “AI vs. AI” is No Longer Theoretical

Attackers have already made the mental pivot: AI is a force multiplier for offense today. Defenders need to accept the same reality. And Anthropic said this out loud in their conclusion:

“We advise security teams to experiment with applying AI for defense in areas like SOC automation, threat detection, vulnerability assessment, and incident response.”

That’s the part most vendors avoid saying publicly, because it commits them to a position. If you don’t adopt defensive AI, you’re falling behind adversaries who already have.

Where SOC Teams Should Act Now

Build Detection for Agentic Behaviors

Start by strengthening detection around behaviors that simply don’t look human. Agentic intrusions move at a pace and rhythm that operators can’t match: rapid-fire request chains, automated tool-hopping, endless exploit-generation loops, and bursty enumeration that sweeps entire environments in seconds. Even lateral movement takes on a mechanical cadence with no hesitation.

These patterns stand out once you train your systems to look for them, but they won’t surface through traditional detection tuned for human adversaries.
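As a rough illustration of what "doesn't look human" can mean in practice, the sketch below flags sources whose median gap between consecutive events is under a second. The `Event` record, the `flag_machine_tempo` function, and both thresholds are hypothetical choices made for this example; they are not tuned values or a description of any product's detection logic.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Event:
    source: str       # hypothetical: the account, host, or API key that acted
    timestamp: float  # seconds since epoch


def flag_machine_tempo(events, min_events=20, max_median_gap=0.5):
    """Return the set of sources whose activity looks machine-paced.

    A source is flagged when it has at least `min_events` events and the
    median gap between consecutive events is below `max_median_gap` seconds.
    Both thresholds are illustrative assumptions, not tuned values.
    """
    by_source = defaultdict(list)
    for event in events:
        by_source[event.source].append(event.timestamp)

    flagged = set()
    for source, stamps in by_source.items():
        if len(stamps) < min_events:
            continue  # too little activity to judge tempo
        stamps.sort()
        gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
        if median(gaps) < max_median_gap:
            flagged.add(source)
    return flagged
```

Using the median rather than the mean keeps one long pause from hiding an otherwise mechanical cadence. A real deployment would likely combine tempo with other signals, since legitimate automation also runs at machine speed.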

Make AI a Core Strategy, Not an Experiment

Start thinking of adopting AI to fight specific offensive AI use cases, whilst keeping your human SOC on its routine.

Defenders have to meet this shift head-on and start using AI against the very tactics it enables. The volume and velocity of these intrusions make manual triage pointless.

You need systems that can sift through massive alert loads, isolate and contain suspicious agentic behavior as it unfolds, generate and evaluate countermeasures on the fly, and digest massive log streams without slowing down. Only the genuinely complex cases should ever reach a human. This isn’t aspirational thinking; attackers have already proven the model works.

Key Takeaway

For SOC teams, the takeaway is that defense has to evolve at the same pace as offense. That means treating AI as a core operational capability inside the SOC, not an experiment or a novelty.

The Defender’s AI Mandate: Trust AI to handle tasks where it excels: consistency, thoroughness, and scale.

The Defender’s AI Goal: Delegate volume and noise to defensive AI agents, freeing human analysts to focus only on genuinely complex, high-confidence threats that require strategic human judgment.

Legion Security will continue publishing analysis, defensive patterns, and applied research in this space. If you want a deeper dive into detection signatures or how to operationalize defensive AI safely, just say the word.

Abstract & Data Summary

We gathered and manually annotated a dataset of 196 hard triage decisions from real-world security investigations, covering a wide range of outcomes, including benign, malicious, and false positives. After cleaning the dataset by removing mock runs and cases with missing information or incorrect workflow execution, the remaining 163 examples were grouped into use case categories to form a high-quality cohort. We then evaluated LLMs on the dataset overall and per use-case category and found that Gemini 3 Pro performs best overall, though the best LLM varies by use case category.

Model performance by use case category:

If you’d like to understand our full research methodology, read on.

*Note: since this blog was authored, several new model families have been released. While the results have remained broadly stable, particularly among the best and worst performers, updated research may be required for a nuanced understanding of the performance differences amongst the rest.

Data Collection

The dataset was constructed from security investigations from eight US-based customers. The evaluation was conducted in a secure, federated way: customer data was never mixed, and only summary statistics were reported from each customer tenant.

To create a challenging evaluation, we over-weighted cases in which the analyst disagreed with the model, so the error rate is inflated here.

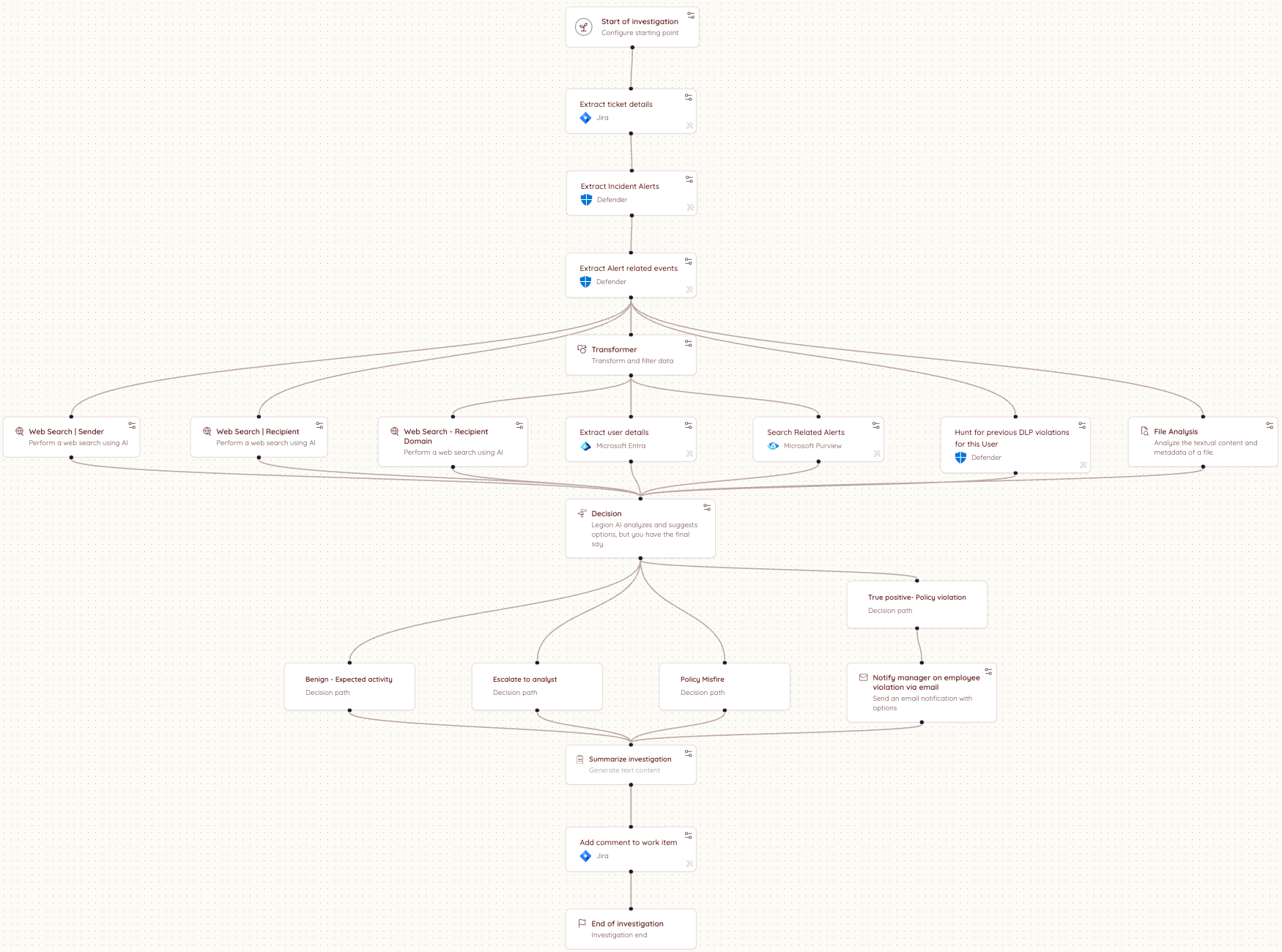

The investigations were conducted automatically according to predefined, customer-specific workflows, each of which contained at least one triage decision node. A triage decision node is a decision point within a workflow, where an LLM chooses a decision from among a list of provided decision options, given the information that was gathered in the workflow up until that point.

At each decision node, the LLM used in production selected a classification decision from a list of workflow-specific decision options and provided the reasoning for its decision, based on a summary of the steps completed until that point in the investigation.

For each investigation containing at least one decision node, we collected the following information from production session logs:

- A summary of the workflow steps up until the decision node, including tool name, step description, and step outputs

- Organization-specific knowledge, written by the customer and containing a title, description, and data

- The set of available decision options at the decision node

- The model's selected decision in production, as well as the reasoning and detailed reasoning for the decision

- The decision option selected by the customer

- Feedback text written by the customer for the decision
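The fields listed above could be held in a record along the following lines. This is only a sketch for readers who want to build a similar dataset: every class and attribute name here is a hypothetical choice, not the schema used in production.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WorkflowStep:
    tool_name: str
    description: str
    output: str


@dataclass
class OrgKnowledge:
    title: str
    description: str
    data: str


@dataclass
class DecisionExample:
    """One triage decision node, as collected from a session log."""
    steps: List[WorkflowStep]           # workflow summary up to the node
    org_knowledge: List[OrgKnowledge]   # written by the customer
    decision_options: List[str]         # options available at the node
    model_decision: str                 # what the production LLM selected
    model_reasoning: str
    model_detailed_reasoning: str
    customer_decision: str              # what the customer selected
    customer_feedback: str = ""

    def is_disagreement(self) -> bool:
        """True when the customer chose a different option than the model."""
        return self.model_decision != self.customer_decision
```

Keeping the model's decision and the customer's decision side by side makes it straightforward to pick out the disagreement cases for review.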

Here is an example workflow diagram:

Quality Control

An expert cybersecurity analyst annotated the 196 decision examples with reasoning tags to explain the production and customer decisions, and to label whether each disagreement is explained by analyst error, mistaken reasoning by the AI, or missing data or steps in the workflow.

Examples tagged with "Workflow ran correctly but missing information" or "Workflow ran incorrectly" were removed from the dataset. Two additional examples with the use case titled "Workshop" were removed, as these were mock runs. For the remaining examples, the workflow ran correctly and was not missing information.
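The filtering rule described above can be sketched as follows. The two tag strings and the "Workshop" use case title come from this post; the dict layout with `tags` and `use_case` keys is an assumption made for the example.

```python
# Tag strings and the mock use case title are taken from the text above.
EXCLUDED_TAGS = {
    "Workflow ran correctly but missing information",
    "Workflow ran incorrectly",
}
MOCK_USE_CASE = "Workshop"


def filter_examples(examples):
    """Keep examples whose workflow ran correctly with complete information.

    Each example is assumed to be a dict with a "tags" collection and a
    "use_case" string; that layout is hypothetical.
    """
    kept = []
    for example in examples:
        if EXCLUDED_TAGS & set(example.get("tags", ())):
            continue  # workflow problem or missing information
        if example.get("use_case") == MOCK_USE_CASE:
            continue  # mock run
        kept.append(example)
    return kept
```

Going by the counts reported in this post, filtering took the dataset from 196 annotated examples down to 163.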

Triage Decision Distribution

By Label

Across the filtered dataset, the workflows contained 27 distinct normalized decision labels, which we grouped into the following buckets: False Positive, True Positive, Requires Review, and Other. The distribution of the labels is shown below:

The final evaluation dataset contains data from eight customers. The table below shows the number of annotated decision examples per customer and the tools used in each environment.

Use Case Distribution

We grouped the use cases into three categories to summarize our findings. Below is the mapping from the consolidated categories to the original use cases, as well as the distribution of the dataset over the consolidated categories.

Confusion Matrix

Below is a confusion matrix between the expert analyst annotations and the recommendations our system makes. We prompt the models to be careful and escalate when they are not sure.
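For readers who want to build the same kind of table on their own data, a confusion matrix over the four label buckets can be computed with a sketch like this. The function name and input layout are assumptions for illustration.

```python
from collections import Counter

# The four buckets named earlier in this post.
BUCKETS = ["False Positive", "True Positive", "Requires Review", "Other"]


def confusion_matrix(pairs, labels=BUCKETS):
    """Count (analyst_label, model_label) pairs into a nested dict.

    matrix[analyst][model] is the number of examples that the analyst
    labeled `analyst` and the model labeled `model`.
    """
    counts = Counter(pairs)
    return {
        analyst: {model: counts[(analyst, model)] for model in labels}
        for analyst in labels
    }
```

Cells on the diagonal are agreements. With models prompted to escalate when unsure, the "Requires Review" column is where cautious disagreements would be expected to show up.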

Results

Over all use cases (including those without a use case name), Gemini 3 Pro had the highest performance at 74.8%, with GPT-4.1 and Opus 4.5 tied for second.

Phishing Results:

On the phishing use cases, Gemini 3 Pro performed the best, followed by Opus 4.5.

Account Takeover Results:

Sonnet 4 and GPT-4.1 were tied for best on the account takeover use cases.

Network Results:

Opus 4.5 and GPT-4.1 were tied for best on the network use cases.
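A per-category comparison like the one above can be reproduced on your own data with a small scoring helper. The sketch below assumes the score is the fraction of decisions that match the expert annotation, which this post does not spell out; the function name and tuple layout are likewise hypothetical.

```python
from collections import defaultdict


def accuracy_by_category(records):
    """Return {category: fraction of records where predicted == expected}.

    `records` is an iterable of (category, expected, predicted) tuples.
    An "overall" entry is computed across every record, including records
    whose category is None (no use case name).
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for category, expected, predicted in records:
        for key in ("overall", category):
            if key is None:
                continue  # uncategorized records only count toward "overall"
            totals[key] += 1
            correct[key] += int(expected == predicted)
    return {key: correct[key] / totals[key] for key in totals}
```

Running this once per candidate model over the same records gives a table that can be compared by category.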

Conclusion & Recommendation

We gathered and annotated triage decisions from real-world security investigations, keeping 163 after quality control. We characterized the use case distribution and grouped the use cases into common categories. We then benchmarked large language models on each use case category and on the full dataset. We found that Gemini 3 Pro performs best overall. Per use case category, Gemini 3 Pro gives the best performance on phishing, Sonnet 4 and GPT-4.1 are tied for best on account takeover, and Opus 4.5 and GPT-4.1 are tied for best on network. Based on our results, we recommend that security teams test models on different scenarios to find the one that works best for their use case. Different models are good at different things, and the only way to know which model works best for your use cases is to run a formal evaluation. Or you can trust us! Our research team at Legion is constantly evaluating new models and improvements to our triage pipelines.

We benchmarked leading LLMs on 163 real-world security triage decisions across phishing, account takeover, and network use cases. See which models performed best and why the answer depends on your use case.

The security industry spent years debating when attackers would gain capabilities once out of reach — nation-state-level offensive tooling, zero-day discovery at scale, exploits built and iterated in minutes.

That gap was real. And it gave organizations the impression that the decision about which AI to bring into security operations, and how to do it right, could wait until the picture was clearer.

Mythos ended that assumption.

Not because of the model's size or strength, but because by the time Anthropic announced it, Mythos had already found thousands of high-severity vulnerabilities across every major operating system and browser in use today, without being told where to look. The decision not to release is the signal everyone was looking for.

That changes the implementation question. It was never acceptable to deploy AI badly in the SOC. Now it's not acceptable to deploy it slowly either. The organizations that will come out on top in the next 12 months are the ones that move fast and get it right, and most of the industry is about to discover that those aren't the same thing.

Level set: defenders have always been behind

The average breach lifecycle was already 258 days before AI-assisted attacks became the norm. This has nothing to do with the capabilities of analysts. Human-speed defense against machine-speed offense was always a losing equation.

Mythos-class models will almost certainly expand this breach lifecycle delta.

Most Implementations Are Getting It Wrong

87% of organizations experienced an AI-driven cyberattack in the past year. Security teams know they need AI. Most are already moving. But most implementations are failing for the same reason, and it is not the technology. It is a missing critical datapoint.

You. The context that shapes your business.

Most AI SOC tools treat every organization as interchangeable. They integrate with your SIEM, your EDR, your threat intel platforms, and assume that is enough. It is not. What determines whether AI actually works in your environment has nothing to do with the list of integrations. It is the organizational context that no integration can capture.

How is your organization structured? Where does data actually live versus where it is supposed to live? Who owns what, and how does that map to investigation and response when something goes wrong? How do escalation paths work in practice, not on paper? And critically, how do you enable the business without interrupting it?

The difference shows up clearly in practice. A heavily regulated enterprise running investigations across proprietary internal platforms looks nothing like a technology company. The organizational context that shapes every investigation, every escalation decision, and every response action is invisible to a system that only sees tool outputs.

Closing that gap is the foundational requirement that most implementations skip entirely.

Org Context Is Not a One-Time Setup

This is where most implementations fail, even when they start well.

Organizational context is not a configuration you complete on day one. Your organization is a living thing. Teams change. Tools get added. Processes evolve. New subsidiaries appear. Risk posture shifts with every acquisition, every regulatory update, every new product line the business launches.

An AI system that ingested your context six months ago and stopped learning is already drifting from your reality. It is making decisions based on an organization that no longer exists.

The right model is not a one-off ingestion. It is a continuous learning system that stays embedded in how your organization actually operates, tracks how investigations unfold, incorporates analyst feedback, and updates its understanding as your environment changes.

Not a snapshot.

A persistent model of your specific organization that evolves with it.

What Good Implementation Actually Looks Like

First, the AI system needs to understand how your organization actually operates. Not how it is documented, but how investigations really unfold, where data actually lives, and how decisions get made under pressure. The gap between what is written down and what actually happens is where most AI systems fail.

Second, that understanding cannot be static. Organizations change constantly. New teams, new tools, new processes, new risk priorities. Any system that relies on a snapshot of your environment will drift from reality and degrade over time. The AI working in your environment needs to keep learning it, not just learn it once.

Third, it needs to operate within that context, not around it. Producing technically correct outputs is not enough. The system needs to produce outcomes that are actionable within your organization as it exists today. That means working within your existing workflows, tools, and constraints without asking you to change how you operate to accommodate it.

That is the standard. Systems built around this model behave differently from the start. They do not ask organizations to adapt to them. They adapt to the organization. That distinction is where most implementations succeed or fail, and it is where the industry is slowly converging.

The Only Durable Path

The organizations getting AI right in the SOC aren't the ones with the longest integration lists or the biggest models. They're the ones that treated organizational context as the foundation rather than the afterthought, and built systems that keep learning their environment rather than freezing it in place on day one.

That is a harder implementation. It requires more from the vendor and more from the buyer. But Mythos made the timeline for getting there non-negotiable. The organizations that move fast on the wrong implementation will spend the next year rebuilding. The ones that move slowly on the right one will spend it exposed. The only durable path is moving quickly on the version that actually holds up. Systems built on continuous organizational context, deployed now rather than after the next incident, force the question.

The gap that used to buy time for deliberation is gone. What's left is the quality of the decision you make in its absence.


Mythos ended the debate on whether AI belongs in the SOC. The new question is how to deploy it right and why organizational context is the foundation most implementations skip.

Anthropic was right (and responsible) to release Mythos first to cybersecurity researchers and a select group of organizations through Project Glasswing. It is a genuinely remarkable model. And the security community should take it seriously. What is available to defenders today will be in the hands of attackers in a few months. That window is closing fast.

Mythos raises the ceiling on what AI can do in cybersecurity tasks. It discovers zero-day vulnerabilities in codebases that previous models could not find. It reverse-engineers complex systems. It constructs sophisticated, multi-path exploits at scale. The capabilities that were previously accessible only to well-funded nation-state actors can now be replicated by a far broader set of threat actors. No longer do you need teams of expert reverse engineers and months of reconnaissance.

The threat landscape is structurally shifting. Outcomes will be determined by our ability to shift our defenses in kind, and quickly.

Where AI in defense needs to go first

The industry is converging, rightly, on vulnerability research and remediation as the priority. Scanning your own codebase with the same class of models that attackers are using is a clear first step. In many cases, defenders actually have an asymmetric advantage here, as we have better access to our own code than attackers do.

The harder problem is remediation. We already carry significant backlogs of unresolved, sometimes exploitable, vulnerabilities. Unlike an attacker who has nothing to lose, defenders cannot afford mistakes. Our systems are in production. Downtime has real costs. The asymmetry of attacker agility versus defender accountability is where the gap widens.

AI-assisted vulnerability remediation at scale is necessary. But it is not a solved problem, and any honest assessment of the landscape has to acknowledge that.

What this means for security operations

The idea of static detections designed to discover dynamic adversaries is fundamentally misguided. The future is better trip wires and an assume-breach mentality.

For SOC teams, the implications are direct. The scale and complexity of attacks are increasing. We should expect a higher volume of sophisticated attacks that actively evade detection, that do not conform to known signatures or behavioral patterns, and that are designed from the ground up to stay invisible.

This breaks the model that most SOC programs are built on. The idea of maintaining a library of static detections to catch dynamic adversaries has always had limits. Those limits are now being exposed in real time.

What we need instead is the ability to detect a high volume of low-fidelity signals, such as anomalies in endpoint behavior, data access patterns, email activity, network flows, and identity. This requires teams to investigate each one as if it were the leading edge of a sophisticated breach, not because every alert is a nation-state intrusion, but because we should expect that a higher percentage now may be.

The question is no longer whether to adopt AI in security operations. This is clearly needed. We cannot scale defenses solely on human labor.

The question is how to do it in a way that actually works inside the operational reality that security teams live in.

The real challenge is operational reality

Enterprises have legacy and custom tools, established processes, compliance and audit requirements, escalation paths, and oversight obligations that are not optional. AI cannot simply replace this infrastructure. It has to work within it.

You cannot properly scale your defenses without giving AI access to your organizational context, including your tools, your processes, your detection logic, and your escalation criteria. AI agents need to be able to investigate with the consistency and rigor of an incredible IR analyst, operate transparently, and support human oversight at the points where it matters.

This is precisely what we built Legion to do: meet organizations where they are. Our platform learns your existing tools, processes and context and makes them accessible to the latest frontier models (now Mythos, and every model in the future). From that we create structured, repeatable workflows where consistency is required or fully agentic investigations that require depth and judgment. Every action is auditable. Human-in-the-loop controls are configurable. And the system integrates across your entire stack.

My conclusion: assume breach, investigate everything, and build for the attacker who has already found the vulnerability you have not yet patched and is using Mythos-level models to stay ahead of your detections.

In the wake of Mythos and Project Glasswing, security operations teams need AI that meets them where they are.