We benchmarked leading LLMs on 163 real-world security triage decisions across phishing, account takeover, and network use cases. See which models performed best and why the answer depends on your use case.

Abstract & Data Summary

We gathered and manually annotated a dataset of 196 hard triage decisions from real-world security investigations, covering a wide range of outcomes, including benign, malicious, and false positives. After cleaning the dataset by removing mock runs and cases with missing information or incorrect workflow execution, the remaining 163 examples were grouped into use case categories to form a high-quality cohort. We then evaluated LLMs on the dataset overall and per use-case category and found that Gemini 3 Pro performs best overall, though the best LLM varies by use case category.

Model performance by use case category:

If you’d like to understand our full research methodology, read on.

*Note: since this blog was authored, several new model families have been released. While the results have remained broadly stable, particularly among the best and worst performers, updated research may be required for a nuanced understanding of the performance differences amongst the rest.

Data Collection

The dataset was constructed from security investigations at eight US-based customers. The evaluation was conducted in a secure, federated way: customer data was never mixed, and only summary statistics were reported from each customer tenant.

To create a challenging evaluation, we over-weighted cases in which the analyst disagreed with the model, so the error rate reported here is inflated.

The investigations were conducted automatically according to predefined, customer-specific workflows, each of which contained at least one triage decision node. A triage decision node is a decision point within a workflow, where an LLM chooses a decision from among a list of provided decision options, given the information that was gathered in the workflow up until that point.

At each decision node, the LLM used in production selected a classification decision from a list of workflow-specific decision options and provided the reasoning for its decision, based on a summary of the steps completed until that point in the investigation.

For each investigation containing at least one decision node, we collected the following information from production session logs:

- A summary of the workflow steps up until the decision node, including tool name, step description, and step outputs

- Organization-specific knowledge, written by the customer and containing a title, description, and data

- The set of available decision options at the decision node

- The model's selected decision in production, as well as the reasoning and detailed reasoning for the decision

- The decision option selected by the customer

- Feedback text written by the customer for the decision
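One way to picture a single collected record is the sketch below. The class and field names are illustrative assumptions that mirror the list above; they are not the production schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class WorkflowStep:
    # One step executed before the decision node.
    tool_name: str
    description: str
    output: str


@dataclass
class OrgKnowledge:
    # Customer-written, organization-specific knowledge entry.
    title: str
    description: str
    data: str


@dataclass
class DecisionExample:
    steps: list[WorkflowStep]
    org_knowledge: list[OrgKnowledge]
    decision_options: list[str]
    model_decision: str
    model_reasoning: str
    model_detailed_reasoning: str
    customer_decision: Optional[str] = None
    customer_feedback: Optional[str] = None

    def is_disagreement(self) -> bool:
        # True when the customer picked a different option than the model.
        return (
            self.customer_decision is not None
            and self.customer_decision != self.model_decision
        )
```

A helper like `is_disagreement` (hypothetical) is the kind of flag that could be used to over-weight the hard cases described earlier.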

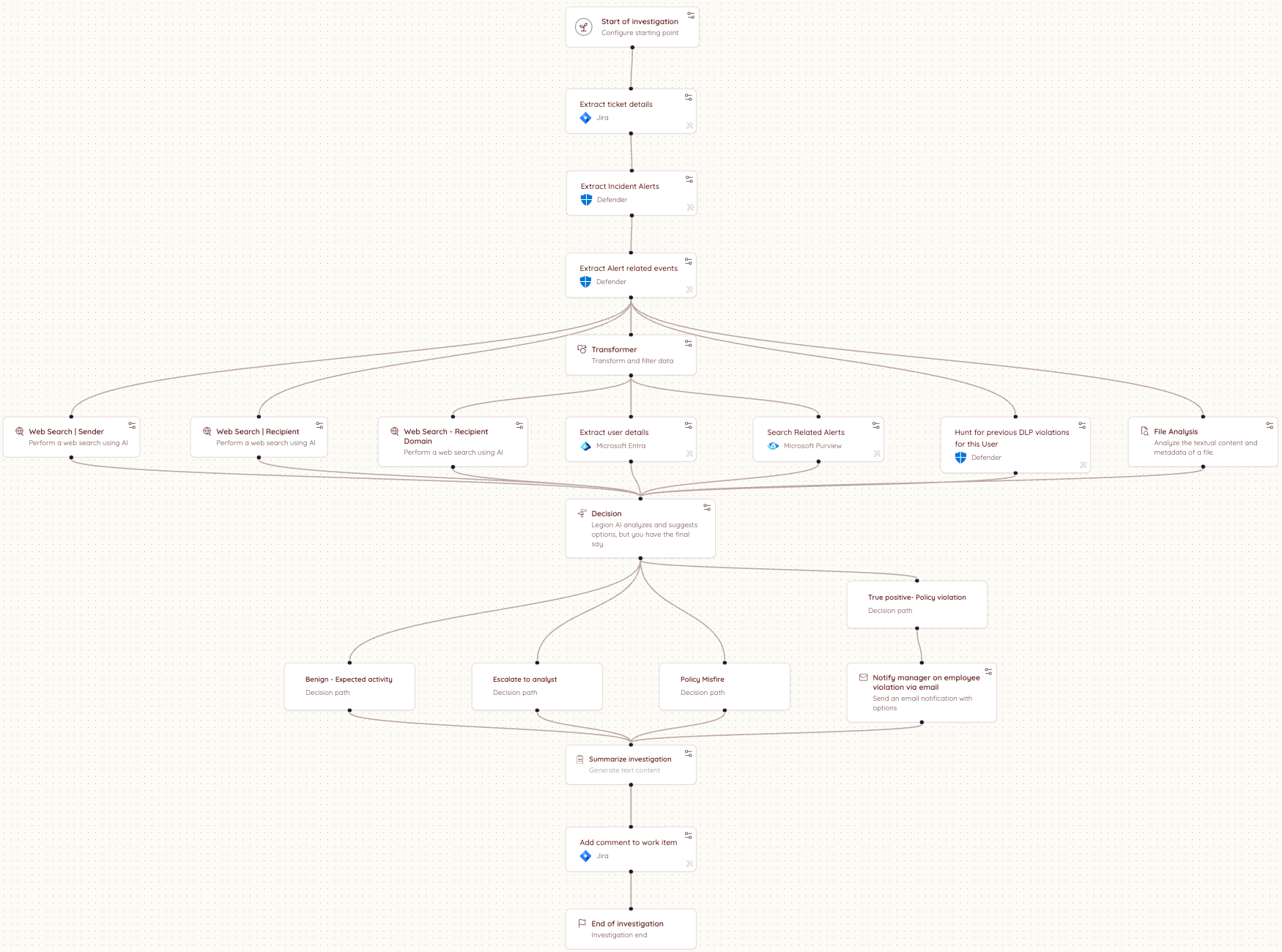

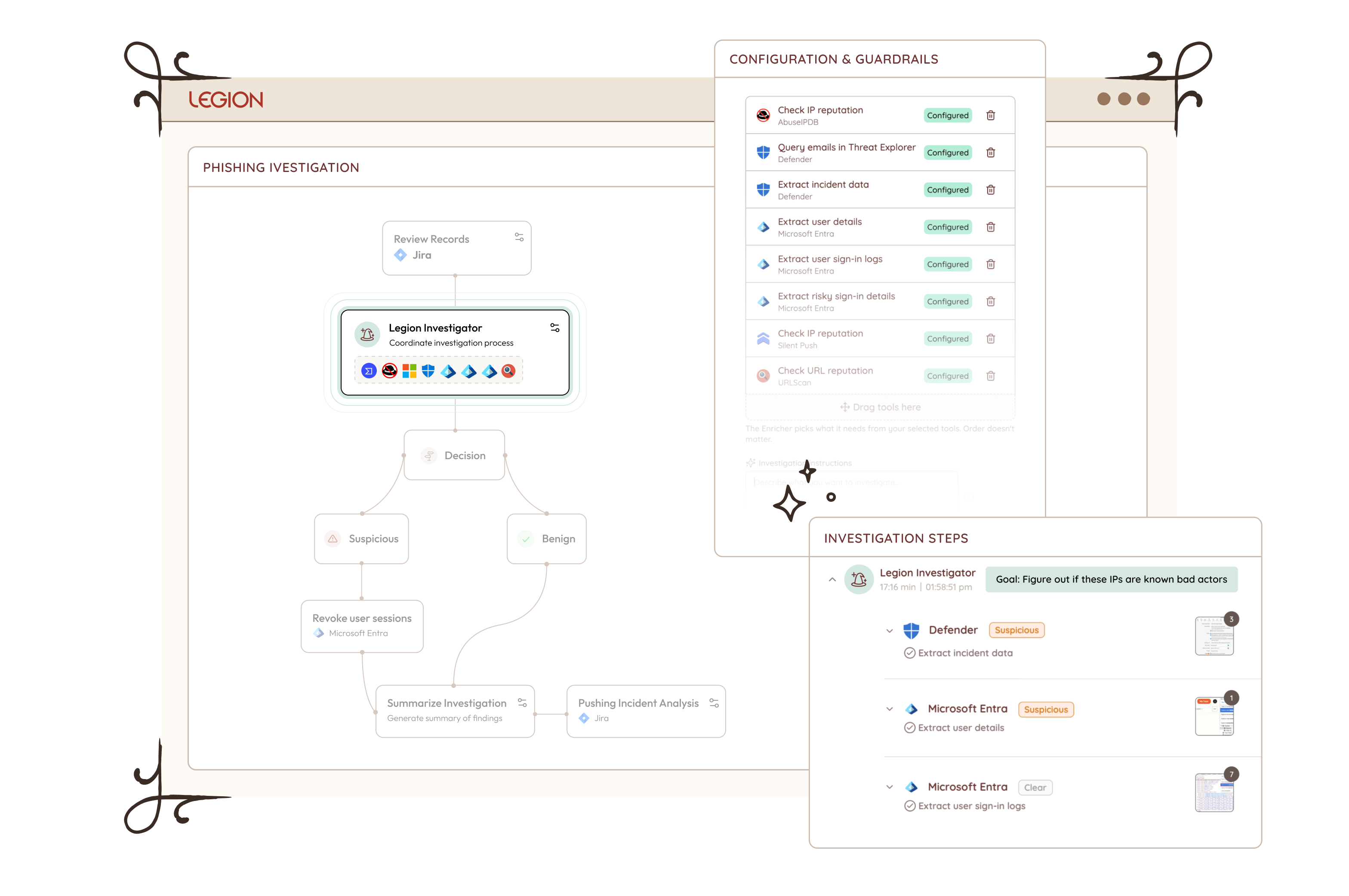

Here is an example workflow diagram:

Quality Control

An expert cybersecurity analyst annotated the 196 decision examples with reasoning tags that explain the production and customer decisions and label whether each disagreement is explained by analyst error, mistaken reasoning by the AI, or missing data or steps in the workflow.

Examples tagged with "Workflow ran correctly but missing information" or "Workflow ran incorrectly" were removed from the dataset. Two additional examples with the use case titled "Workshop" were removed, as these were mock runs. For the remaining examples, the workflow ran correctly and was not missing information.
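The filtering rule described above can be sketched roughly as follows. The two tag strings and the "Workshop" title come from the text; the dictionary keys `tags` and `use_case` are assumptions made for illustration.

```python
# Tags that disqualify an example, as named in the text above.
EXCLUDED_TAGS = {
    "Workflow ran correctly but missing information",
    "Workflow ran incorrectly",
}
# Use case title that marks a mock run.
MOCK_USE_CASE = "Workshop"


def keep_example(example: dict) -> bool:
    """Return True if the example survives quality control.

    Assumes each example is a dict with a "tags" list and an optional
    "use_case" string (hypothetical field names).
    """
    if example.get("use_case") == MOCK_USE_CASE:
        return False
    return not (set(example.get("tags", [])) & EXCLUDED_TAGS)


def clean_dataset(examples: list[dict]) -> list[dict]:
    # Keep only examples where the workflow ran correctly with full information.
    return [e for e in examples if keep_example(e)]
```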

Triage Decision Distribution

By Label

Across the filtered dataset, the workflows contained 27 distinct normalized decision labels, which we grouped into the following buckets: False Positive, True Positive, Requires Review, and Other. The distribution of the labels is shown below:

The final evaluation dataset contains data from eight customers. The table below shows the number of annotated decision examples per customer and the tools used in each environment.

Use Case Distribution

We consolidated the use cases into three categories to summarize our findings. Below is the mapping from the consolidated categories to the original use cases, as well as the distribution of the dataset over the consolidated categories.

Confusion Matrix

Below is a confusion matrix between the expert analyst annotations and the recommendations our system makes. We prompt the models to be careful and escalate when they are not sure.
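For readers who want to build the same kind of table on their own data, here is a minimal sketch. It assumes both the analyst annotation and the system recommendation have already been mapped to the four buckets named earlier; the function name is hypothetical.

```python
from collections import Counter

BUCKETS = ["False Positive", "True Positive", "Requires Review", "Other"]


def confusion_matrix(analyst_labels, model_labels, buckets=BUCKETS):
    """Rows are analyst buckets, columns are model buckets."""
    if len(analyst_labels) != len(model_labels):
        raise ValueError("label lists must have the same length")
    # Count each (analyst, model) pair once.
    counts = Counter(zip(analyst_labels, model_labels))
    return [[counts[(a, m)] for m in buckets] for a in buckets]
```

Cells in the "Requires Review" column show where a model escalated instead of committing to a verdict, which is the behavior the prompt encourages.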

Results

Over all use cases (including those without a use case name), Gemini 3 Pro had the highest performance at 74.8%, with GPT-4.1 and Opus 4.5 tied for second.

Phishing Results:

On the phishing use cases, Gemini 3 Pro performed the best, followed by Opus 4.5.

Account Takeover Results:

Sonnet 4 and GPT-4.1 were tied for best on the account takeover use cases.

Network Results:

Opus 4.5 and GPT-4.1 were tied for best on the network use cases.
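A per-category comparison like the one above can be reproduced on your own data with a few lines. This sketch assumes performance is the share of examples where the model's decision matches the expert annotation; the function name and row layout are illustrative.

```python
from collections import defaultdict


def accuracy_by_category(rows):
    """rows: iterable of (category, expected, predicted) tuples.

    Examples without a use case name can pass None as the category;
    they still count toward the "overall" score.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for category, expected, predicted in rows:
        for key in (category, "overall"):
            total[key] += 1
            correct[key] += int(expected == predicted)
    return {key: correct[key] / total[key] for key in total}
```

Running this once per candidate model, on the same examples, gives a table of overall and per-category scores that can be compared directly.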

Conclusion & Recommendation

We gathered and annotated 163 triage decisions from real-world security investigations. We characterized the use case distribution and grouped the use cases into common categories. We then benchmarked large language models on each use case category and on the full dataset. We found that Gemini 3 Pro performs best overall. Per use case category, Gemini 3 Pro gives the best performance on phishing, Sonnet 4 and GPT-4.1 are tied for best on account takeover, and Opus 4.5 and GPT-4.1 are tied for best on network. Based on our results, we recommend that security teams test models on different scenarios to find the one that works best for their use case. Different models are good at different things, and the only way to know which model works best for your use cases is to run a formal evaluation. Or you can trust us! Our research team at Legion is constantly evaluating new models and improvements to our triage pipelines.

The security industry spent years debating when attackers would gain capabilities once out of reach — nation-state-level offensive tooling, zero-day discovery at scale, exploits built and iterated in minutes.

That gap was real. And it gave organizations the impression that the decision about which AI to bring into security operations, and how to do it right, could wait until the picture was clearer.

Mythos ended that assumption.

Not because of the model's size or strength, but because by the time Anthropic announced it, Mythos had already found thousands of high-severity vulnerabilities across every major operating system and browser in use today, without being told where to look. The decision not to release is the signal everyone was looking for.

That changes the implementation question. It was never acceptable to deploy AI badly in the SOC. Now it's not acceptable to deploy it slowly either. The organizations that will come out on top in the next 12 months are the ones that move fast and get it right, and most of the industry is about to discover that those aren't the same thing.

Level set: defenders have always been behind

The average breach lifecycle was already 258 days before AI-assisted attacks became the norm. This has nothing to do with the capabilities of analysts. Human-speed defense against machine-speed offense was always a losing equation.

Mythos-class models will almost certainly expand this breach lifecycle delta.

Most Implementations Are Getting It Wrong

87% of organizations experienced an AI-driven cyberattack in the past year. Security teams know they need AI. Most are already moving. But most implementations are failing for the same reason, and it is not the technology. It is a missing critical datapoint.

You. The context that shapes your business.

Most AI SOC tools treat every organization as interchangeable. They integrate with your SIEM, your EDR, your threat intel platforms, and assume that is enough. It is not. What determines whether AI actually works in your environment has nothing to do with the list of integrations. It is the organizational context that no integration can capture.

How is your organization structured? Where does data actually live versus where it is supposed to live? Who owns what, and how does that map to investigation and response when something goes wrong? How do escalation paths work in practice, not on paper? And critically, how do you enable the business without interrupting it?

The difference shows up clearly in practice. A heavily regulated enterprise running investigations across proprietary internal platforms looks nothing like a technology company. The organizational context that shapes every investigation, every escalation decision, and every response action is invisible to a system that only sees tool outputs.

Closing that gap is the foundational requirement that most implementations skip entirely.

Org Context Is Not a One-Time Setup

This is where most implementations fail, even when they start well.

Organizational context is not a configuration you complete on day one. Your organization is a living thing. Teams change. Tools get added. Processes evolve. New subsidiaries appear. Risk posture shifts with every acquisition, every regulatory update, every new product line the business launches.

An AI system that ingested your context six months ago and stopped learning is already drifting from your reality. It is making decisions based on an organization that no longer exists.

The right model is not a one-off ingestion. It is a continuous learning system that stays embedded in how your organization actually operates, tracks how investigations unfold, incorporates analyst feedback, and updates its understanding as your environment changes.

Not a snapshot.

A persistent model of your specific organization that evolves with it.

What Good Implementation Actually Looks Like

First, AI systems need to understand how your organization actually operates. Not how it is documented, but how investigations really unfold, where data actually lives, and how decisions get made under pressure. The gap between what is written down and what actually happens is where most AI systems fail.

Second, that understanding cannot be static. Organizations change constantly. New teams, new tools, new processes, new risk priorities. Any system that relies on a snapshot of your environment will drift from reality and degrade over time. The AI working in your environment needs to keep learning it, not just learn it once.

Third, it needs to operate within that context, not around it. Producing technically correct outputs is not enough. The system needs to produce outcomes that are actionable within your organization as it exists today. That means working within your existing workflows, tools, and constraints without asking you to change how you operate to accommodate it.

That is the standard. Systems built around this model behave differently from the start. They do not ask organizations to adapt to them. They adapt to the organization. That distinction is where most implementations succeed or fail, and it is where the industry is slowly converging.

The Only Durable Path

The organizations getting AI right in the SOC aren't the ones with the longest integration lists or the biggest models. They're the ones that treated organizational context as the foundation rather than the afterthought, and built systems that keep learning their environment rather than freezing it in place on day one.

That is a harder implementation. It requires more from the vendor and more from the buyer. But Mythos made the timeline for getting there non-negotiable. The organizations that move fast on the wrong implementation will spend the next year rebuilding. The ones that move slowly on the right one will spend it exposed. The only durable path is moving quickly on the version that actually holds up. Systems built on continuous organizational context, deployed now rather than after the next incident, force the question.

The gap that used to buy time for deliberation is gone. What's left is the quality of the decision you make in its absence.

Mythos ended the debate on whether AI belongs in the SOC. The new question is how to deploy it right and why organizational context is the foundation most implementations skip.

Anthropic was right (and responsible) to release Mythos first to cybersecurity researchers and a select group of organizations through Project Glasswing. It is a genuinely remarkable model. And the security community should take it seriously. What is available to defenders today will be in the hands of attackers in a few months. That window is closing fast.

Mythos raises the ceiling on what AI can do in cybersecurity tasks. It discovers zero-day vulnerabilities in codebases that previous models could not find. It reverse-engineers complex systems. It constructs sophisticated, multi-path exploits at scale. The capabilities that were previously accessible only to well-funded nation-state actors can now be replicated by a far broader set of threat actors. No longer do you need teams of expert reverse engineers and months of reconnaissance.

The threat landscape is structurally shifting. We will be defined by our ability to shift our defenses in kind, and quickly.

Where AI in defense needs to go first

The industry is converging, rightly, on vulnerability research and remediation as the priority. Scanning your own codebase with the same class of models that attackers are using is a clear first step. In many cases, defenders actually have an asymmetric advantage here, as we have better access to our own code than attackers do.

The harder problem is remediation. We already carry significant backlogs of unresolved, sometimes exploitable, vulnerabilities. Unlike an attacker who has nothing to lose, defenders cannot afford mistakes. Our systems are in production. Downtime has real costs. The asymmetry of attacker agility versus defender accountability is where the gap widens.

AI-assisted vulnerability remediation at scale is necessary. But it is not a solved problem, and any honest assessment of the landscape has to acknowledge that.

What this means for security operations

The idea of static detections designed to discover dynamic adversaries is fundamentally misguided. The future is better trip wires and an assume-breach mentality.

For SOC teams, the implications are direct. The scale and complexity of attacks are accelerating. We should expect a higher volume of sophisticated attacks that actively evade detection, that do not conform to known signatures or behavioral patterns, and that are designed from the ground up to stay invisible.

This breaks the model that most SOC programs are built on. The idea of maintaining a library of static detections to catch dynamic adversaries has always had limits. Those limits are now being exposed in real time.

What we need instead is the ability to detect a high volume of low-fidelity signals, such as anomalies in endpoint behavior, data access patterns, email activity, network flows, and identity. This requires teams to investigate each one as if it were the leading edge of a sophisticated breach. Not because every alert is a nation-state intrusion, but because we should expect that a higher percentage now may be.

The question is no longer whether to adopt AI in security operations. This is clearly needed. We cannot scale defenses solely on human labor.

The question is how to do it in a way that actually works inside the operational reality that security teams live in.

The real challenge is operational reality

Enterprises have legacy and custom tools, established processes, compliance and audit requirements, escalation paths, and oversight obligations that are not optional. AI cannot simply replace this infrastructure. It has to work within it.

You cannot properly scale your defenses without giving AI access to your organizational context, including your tools, your processes, your detection logic, and your escalation criteria. AI agents need to be able to investigate with the consistency and rigor of an incredible IR analyst, operate transparently, and support human oversight at the points where it matters.

This is precisely what we built Legion to do: meet organizations where they are. Our platform learns your existing tools, processes and context and makes them accessible to the latest frontier models (now Mythos, and every model in the future). From that we create structured, repeatable workflows where consistency is required or fully agentic investigations that require depth and judgment. Every action is auditable. Human-in-the-loop controls are configurable. And the system integrates across your entire stack.

My conclusion: assume breach, investigate everything, and build for the attacker who has already found the vulnerability you have not patched yet and is using Mythos-level models to stay ahead of your detections.

In the wake of Mythos and Project Glasswing, security operations teams need AI that meets them where they are.

Picture a senior analyst mid-investigation. Eight browser tabs open across CrowdStrike, VirusTotal, Defender, and Microsoft Entra. She's running a hunting query in one window, checking an IP reputation score in another. And somewhere in between, she's documenting. Taking screenshots, copying log entries into a case note, capturing context before it slips away.

This is the job. Investigations today aren't just about finding the threat. They're about moving across tools, pulling together evidence from a dozen different sources, and building a record that another analyst, or an auditor, or a manager, can actually follow. The documentation isn't a distraction from the work. It is part of the work.

Everyone in security has lived that.

Which raises a question that's been easy to ignore until now: if we wouldn't accept an analyst who said "trust me, I looked at it," why are we accepting that from AI agents?

Evidence Has Always Been the Standard

The reason SOC analysts document isn't distrust. It's precision. A good investigation has always meant showing your work. The summary an analyst writes is their claim, the insight they've drawn from what they saw. The screenshot is the fact. Indisputable evidence, captured at the moment of discovery. Together they tell the full story: here is what I found, and here is the proof.

Evidence gathering has always been a core part of the job. Screenshots and logs aren't bureaucratic overhead. They're how you distinguish signal from noise, how you close out audit findings, how you hand off a case without losing context.

You Wouldn't Accept "Trust Me" From an Analyst. Stop Accepting It From AI

We hold human analysts to a clear standard. When an analyst closes a case, we expect to see their work. The exact screen they reviewed, the exact query they ran, the exact result that informed their decision. A summary of what they found is a claim. The screenshot is the proof.

We should hold AI agents to the same standard.

Today, most AI SOC tools give you a verdict and a reason. The agent processed the alert, evaluated the indicators, and concluded it was malicious. But if you ask what it actually saw, you're directed to API logs and structured JSON responses. That's not evidence. That's a reconstruction built after the fact, from data that was never meant to be read by a human auditor in the first place.

The gap between what an AI agent did and what you can actually verify is where hallucination risk lives. A summary can sound confident and still be wrong. Without visual evidence captured at the moment of the decision, you have no way to know what the system actually encountered.

Legion operates differently. Instead of calling APIs, Legion navigates your source systems directly through the browser, the same way a human analyst would. It opens the actual system, reads the actual screen, and captures a screenshot of exactly what it sees at every step. The summary is the claim. The screenshot is the fact.

That's the standard we believe AI investigations should meet. And it's the only architecture that meets it.

How Legion Automates Evidence Gathering

Legion Evidence Gathering captures visual proof of every action Legion takes as it navigates your source systems, automatically, in real time.

Take a malware investigation spanning CrowdStrike, VirusTotal, and Defender. Legion opens the originating ticket, reads the case, and begins investigating. As it moves through each tool, it takes a screenshot at every step. The CrowdStrike detection page as it appeared. The VirusTotal result in context. The Defender hunting query and its output. Every interface, exactly as Legion saw it.

By the time an analyst opens the case, the full evidence gallery is already there. Screenshots organized sequentially, labeled by tool, timestamped, and ready to review. Not just a summary. Not just a log. The complete picture: the analysis and the visual evidence behind every conclusion.

And it stays there. Every investigation Legion runs is stored and searchable. When an auditor asks a question, when a peer analyst picks up a handoff, when someone needs to understand why a decision was made, you go back to the session and everything is right there. Every step. Every screen. Nothing reconstructed. Nothing missing.

Different alert types. Different toolchains. The same complete evidence gallery, every time.

This Is What Accountable AI Looks Like

We've always known what a good investigation looks like. You show your work. You back your conclusions with evidence. You leave a record that someone else can follow. Legion applies that same standard to every automated investigation it runs, without exception and without manual effort. The bar doesn't move because the analyst is an AI. It stays exactly where it's always been.

See Legion Evidence Gathering in action. Request a Demo

Legion automates evidence gathering during AI-driven investigations, capturing screenshots from live security tools at every step, so every conclusion is backed by visual proof.

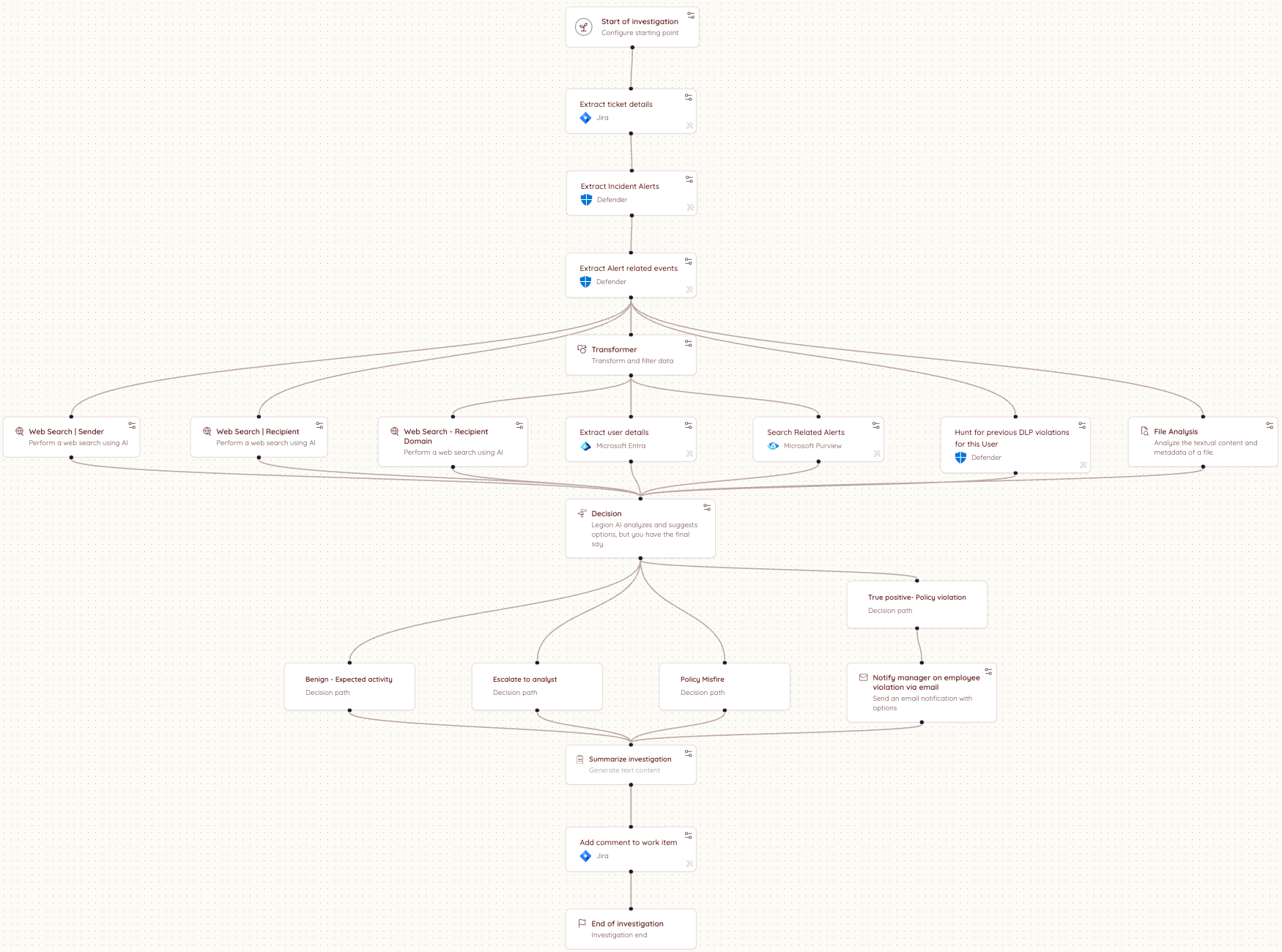

SOC investigations range widely. Some are highly repeatable: every step defined, every decision documented. These work well and can be fully automated. But some investigations eventually reach a point where that breaks down: where the next step depends on what you just found, and the judgment and intuition to know what it means.

You can see it clearly the moment you try to write it down. Some processes flow neatly from start to finish. But as soon as you move into more complex investigations, the cracks appear. You find yourself pulled into a spiral of edge cases, tool variations, and fallback paths. You add branches. Then branches on branches. And after all that effort, you almost always end up in the same place: where no rule applies, and only judgment, reasoning and intuition can take you further.

The Part You Can Never Quite Capture

SOC investigations don't all look the same. Some are fully deterministic: a user notification when an outgoing email gets blocked, no reasoning required. For these, consistency matters. The same steps, the same outcome, every time. Others are the opposite: novel threats with no fixed path, no known pattern, where only experience, intuition, and judgment can tell you what to do next. And many fall somewhere in between, where you start with structure and hit a point where judgment has to take over.

But even those flows have a ceiling. Take a phishing investigation. You can document the triage steps pretty cleanly: check the sender, analyze the headers, detonate the attachment, check the URLs. That part is routine and capturable. But the moment you find something suspicious, the investigation shifts. Now you need to reason about scope: is this part of a campaign, and who else was hit? That question has no fixed answer. You might search for other emails with the same subject, but any decent campaign will vary the lures across targets, changing subjects, sender names, and payload links to evade detection. You cannot match on a single field and call it done. You need to iterate: follow one thread, see what it reveals, adjust your search, go again. You are reading the environment in real time, making judgment calls at every step based on what the last one uncovered.

Those judgment points show up on every shift, on every alert that goes beyond the routine. Someone has to reason through them in the moment, with whatever context they have, under whatever pressure exists right now. Until 3am. Until a less experienced analyst picks it up. Until alert volume means there simply isn't time to think it through properly.

That reasoning is not pre-programmed. It emerges from the finding itself. It is what a senior analyst does instinctively, and until now there has been no way to replicate it at scale. Legion Investigator is built for that moment.

Your Environment. Your Logic. Your Investigator.

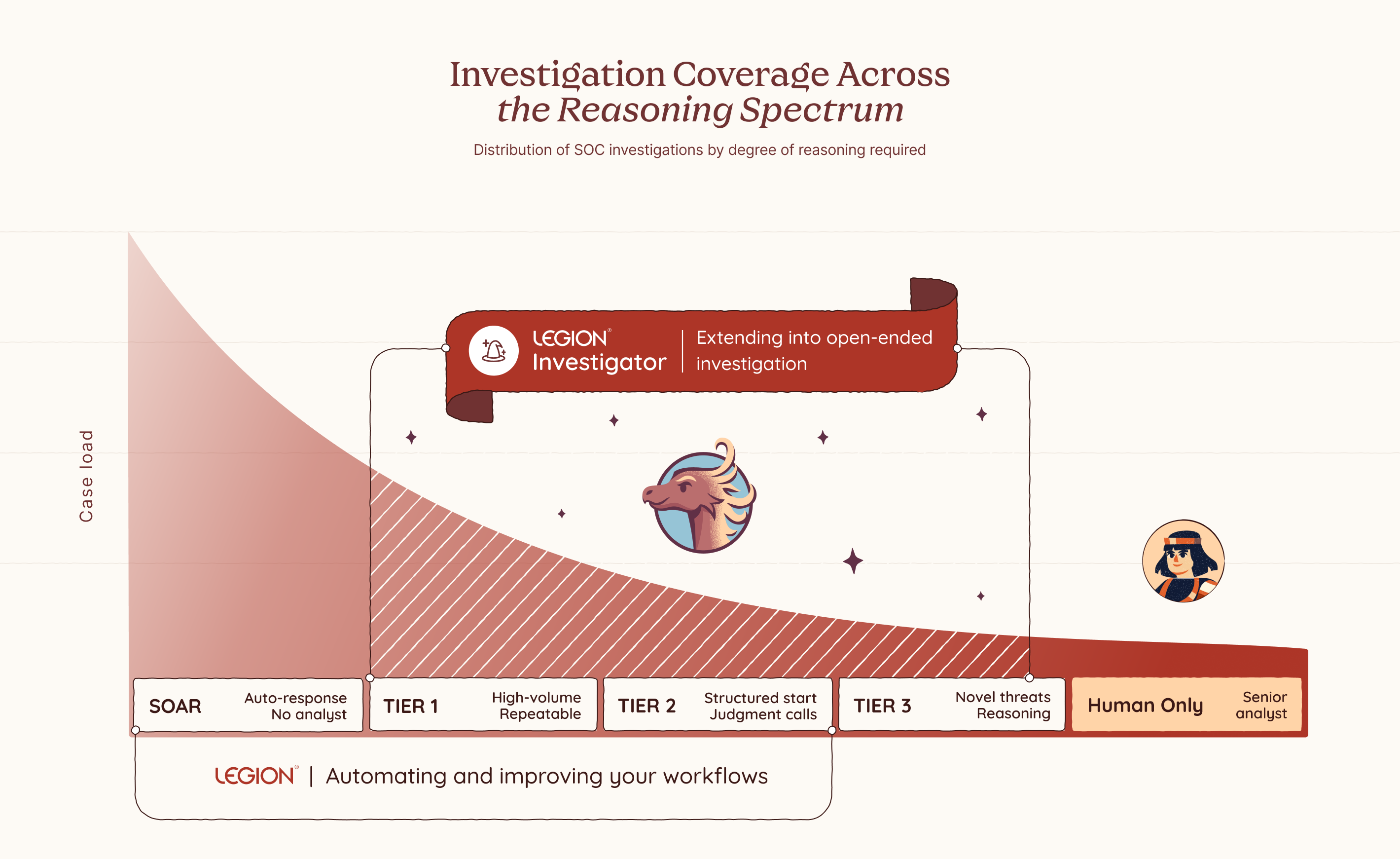

Legion Investigator is a goal-oriented AI agent that sits inside your investigation workflow at exactly the moments where reasoning takes over from execution, extending Legion's coverage across the full spectrum of SOC investigations, from fully deterministic workflows to complex open-ended investigations. You define its goal, you choose which tools and actions it is permitted to use, and you decide where it acts autonomously and where it checks in first.

Which category a given investigation falls into is sometimes obvious. But often it is a deliberate choice, one that should be yours to make based on your team's needs, your risk tolerance, and how much consistency versus flexibility the situation calls for. Where on that spectrum each investigation runs is yours to decide. Every boundary is one you set in advance and can trust will be respected. This is what makes Investigator the kind of AI enterprises can actually adopt: not just powerful, but designed from the ground up to operate within your constraints, your processes, and your level of trust.

Most AI SOC tools bring their own model of how investigations should work. Legion Investigator learns from how yours actually do. It builds its understanding from your team's recorded investigation sessions, the decisions they make, the paths they take, and the patterns that emerge across real cases in your environment. Over time, Legion builds a structured knowledge base specific to your organization, capturing your processes, your tooling, and your team's accumulated expertise. That knowledge is not just stored. It is actively used to improve your captured workflows and feeds directly into how Investigator reasons, prioritizes, and investigates.

And when we say your tools, we mean all of them. Legion Investigator works the way your analysts work, through the browser, with no integrations and no APIs required. Your SIEM, your EDR, your threat intelligence platforms, your homegrown applications, your legacy dashboards, your on-prem and cloud environments. You don’t rebuild your stack to fit the tool. The tool fits your stack.

The way it works reflects how investigations actually flow. An investigation might start in your SIEM with a set of routine queries, structured, reliable, repeatable. But when it reaches one of those decision points, you hand off to an Investigator with a goal: find the scope of breach, enrich the full context of what we have so far, identify what else was impacted across endpoints and cloud assets.

The Investigator takes that goal and works toward achieving it. It invokes the relevant tools, interprets what comes back, recalculates what to do next, and invokes again. It keeps going, step by step, until the goal is met. Not a single tool call with a result handed back to you. A full reasoning loop that runs until the work is done, across your security tools, your homegrown applications, and any AI agents already running in your environment. Investigator acts as the orchestrator, pulling in whatever is needed to get there.

Multiple Investigators can work together across a single investigation. One handles enrichment. Another determines scope of breach. A third drives containment based on what was actually found, not what was anticipated when the playbook was written.

And because trust matters, Investigator operates within guardrails. It works only with the tools and actions it’s been given permission to use. For anything higher risk, it asks before acting. You stay in control by setting the boundaries in advance and knowing they’ll be respected.
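As a rough sketch of the loop and guardrails described above, assuming nothing about Legion's actual implementation: every name here (`run_investigator`, `plan`, `approve`, the two toy tools) is hypothetical, and a simple rule-based planner stands in for the model's reasoning. The loop only calls tools on an allowlist, asks for approval before anything marked high risk, and stops when the goal is met or a step budget runs out.

```python
from typing import Callable, Optional

Tool = Callable[[dict], dict]


def run_investigator(
    goal_met: Callable[[dict], bool],
    plan: Callable[[dict], Optional[tuple]],
    tools: dict,
    high_risk: set,
    approve: Callable[[str, dict], bool],
    max_steps: int = 20,
) -> dict:
    """Invoke tools step by step until the goal is met, inside the guardrails."""
    context = {"findings": [], "log": []}
    for _ in range(max_steps):
        if goal_met(context):
            break
        step = plan(context)            # decide the next action from what is known
        if step is None:
            break
        name, args = step
        if name not in tools:           # guardrail 1: permitted tools only
            context["log"].append((name, "blocked"))
        elif name in high_risk and not approve(name, args):
            context["log"].append((name, "denied"))   # guardrail 2: ask first
        else:
            context["findings"].append(tools[name](args))
            context["log"].append((name, "ok"))
    return context


# Toy tools and planner, purely illustrative.
def search_logs(args: dict) -> dict:
    return {"hosts": ["ws-12", "ws-31"]}


def isolate_host(args: dict) -> dict:
    return {"isolated": args["host"]}


def plan(ctx: dict) -> Optional[tuple]:
    attempted = [name for name, _ in ctx["log"]]
    if "search_logs" not in attempted:
        return "search_logs", {"indicator": "pay-portal.test"}
    if "isolate_host" not in attempted:
        return "isolate_host", {"host": "ws-12"}
    return None


result = run_investigator(
    goal_met=lambda ctx: any(name == "isolate_host" for name, _ in ctx["log"]),
    plan=plan,
    tools={"search_logs": search_logs, "isolate_host": isolate_host},
    high_risk={"isolate_host"},
    approve=lambda name, args: False,   # the analyst declines in this run
)
print(result["log"])
```

In this run the low-risk search executes on its own, while the isolation request is recorded as denied because the approval callback said no. The step budget is a design choice for the sketch: it keeps a planner that repeats a blocked action from looping forever.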

What This Changes

Legion Investigator opens up three things that weren't possible before.

Pick up where deterministic processes end

For investigations where you have structured steps, you can now embed an Investigator at exactly the points where structure runs out. The routine parts stay routine. The Investigator reasons further, and by the time you step in, the groundwork is already done.

Handle your long tail of alerts

For the long tail of investigations where you never had a well-defined flow to begin with, you can now hand them off end to end. The Investigator handles enrichment before you even open the case, drives containment the moment scope is confirmed, and picks up every judgment point in between. Give the Investigator the goal, set the guardrails, and let it run. No playbook required.

Every investigation, regardless of how well-defined it is, can now be handled with the depth of your best analyst, on every alert, on every shift. And for the first time, you control where on that spectrum each investigation runs. More structure where consistency matters. More autonomy where judgment, experience, and intuition are required. The balance is yours to set, and yours to change.

This is not about replacing analysts. It never was. There will always be moments that require human judgment, experience, and instinct, and no AI should pretend otherwise. What changes is everything around those moments. The analyst becomes the commander: setting goals, defining boundaries, sending investigators out into the environment to gather, reason, and report back. The calls that matter stay with you. The work that surrounds them no longer has to. Not because we built a smarter AI. Because we built one that learned from you.

Introducing Legion AI Investigator: AI that reasons where playbooks can't. Define the goal, set the guardrails, and let it investigate across your tools — no integrations required.

I spent a long time staring at screens that couldn't keep up. Not because the analysts weren't good, but because the volume, the speed, the sheer relentlessness of what we were defending against had already outpaced the model. Tier 1 is working a queue. Tier 2 is doing the same thing, slower with more context. Tier 3 is getting pulled into fires before they finish the last one. Humans are trying to move at machine speed. It never worked. We just found ways to cope with it not working.

On March 6th, the White House said it out loud. Its strategy states directly that the administration will "rapidly adopt and promote agentic AI in ways that securely scale network defense." It calls for AI-powered cybersecurity solutions to defend federal networks and deter intrusions at scale. It frames the cyber workforce not as the primary defense mechanism, but as the strategic asset that designs and deploys the systems that do the actual defending.

That is not subtle. That is a pivot.

I've seen a lot of strategy documents come and go. Most of them describe the problem correctly and then propose solutions that require the same broken model to execute them. More analysts. More tools. More compliance frameworks that generate reports nobody reads. This one is different in a specific way. It acknowledges that human-speed defense has a ceiling, and the adversary has already blown past it.

This matters operationally. Not because government mandates translate directly to enterprise practice, but because the logic behind the mandate is undeniable and most organizations are about two or three incidents away from being forced to confront it themselves.

Here is what I actually read in that document when I strip away the political framing:

Threat actors are using AI to accelerate attack timelines and broaden their operational surface area. The gap between when something happens and when a human analyst understands what happened is widening. That gap is where organizations get compromised. The strategy is essentially acknowledging that the only viable response to AI-accelerated offense is AI-accelerated defense. Not AI-assisted. Not AI-augmented. AI that acts.

That is exactly what we built Legion to do.

Not because we read the strategy. Because we lived through the alternative. I've watched skilled analysts spend the first forty minutes of an investigation just gathering context. Pulling logs from one tool, cross-referencing with another, chasing an IP through three different platforms before they can even form a hypothesis. That is not a people problem. That is a workflow problem. And it compounds at scale until your senior analysts are doing glorified data retrieval and your tier 1 analysts are drowning in volume they were never equipped to handle alone.

Legion treats that problem directly. It captures how experienced analysts actually investigate, the sequences, the correlations, the judgment calls, and runs those workflows autonomously at the speed the threat environment requires. Not replacing the analyst, but removing the friction that slows the analyst. Campaign hunting, alert triage, IOC blocking, CVE impact assessment across your entire environment, running while your team focuses on what actually requires human judgment.

The strategy also makes a point worth taking seriously. Deploying autonomous AI in your environment without understanding what it's doing is not security. It's a different kind of exposure. The document calls for securing the entire AI technology stack, and that is not bureaucratic language. That is operational reality. Any organization rushing to adopt agentic capabilities without visibility into how those agents operate and what they can access has traded one risk for another.

The teams I respect are the ones asking both questions at the same time. How do we move at machine speed? And how do we maintain accountability over the systems doing it?

The strategy just told you where the industry is going. The question is whether your operations are positioned to keep pace with it, or whether you're still trying to scale a model that was already failing before the AI arms race began.

I know which answer I kept seeing at 3 am.

The White House just pivoted: human-speed cybersecurity has reached its ceiling. Discover why the shift to agentic AI is no longer optional and how Legion is bridging the gap between machine-speed threats and human-scale defense.

Security leaders often talk about the cost of hiring analysts. Salaries, benefits, training budgets, and a recruiter or two. Those numbers are simple to track, so they tend to dominate planning conversations. The reality inside every SOC is very different. The real costs do not show up neatly in a spreadsheet. They accumulate in the gaps between processes, in the repetitive tasks analysts cannot avoid, and in the institutional drag created when people burn out or walk out the door.

Most SOCs are not struggling with a talent shortage. They are struggling with talent waste. Skilled people spend too much time on work that is beneath their capabilities. The hard truth is that this is a design problem, not a staffing problem. Until SOCs address it head-on, the cycle repeats itself: more hiring, more turnover, more loss of knowledge, more missed opportunities.

This is the part of the SOC budget most leaders still underestimate.

The Real Cost of Hiring and Ramp-Up

Hiring an analyst feels like progress. It also comes with costs that rarely get accounted for. The first few months of a new hire can be more expensive than the hire itself. Senior analysts are pulled away from active investigations to train newcomers. Work slows down. Processes become inconsistent.

One customer summarized the issue clearly: “Most of our onboarding time goes into walking new analysts through the same basic steps. If we could guide them through those workflows with Legion, our team could focus their time on real investigations.”

When experienced analysts spend their days teaching repetitive steps instead of improving detection quality or strengthening defenses, the SOC loses far more than money. It loses momentum. And momentum is what allows a team to stay ahead of attackers.

Repetitive, Boring Work Creates Predictable Burnout

Tier 1 and Tier 2 analysts often do not quit because the mission is uninspiring. They quit because the tasks are. Every SOC leader knows this, but very few have solved it. The daily flood of low-complexity alerts, routine enrichment steps, and copy-and-paste investigations grinds people down.

Burnout is not a mystery. It is the predictable result of asking talented people to repeat the same low-value tasks.

When people leave, you lose more than a seat. You lose context, intuition, and the fundamental knowledge that comes from long-term exposure to your environment. Hiring someone new does not replace that.

The Opportunity Cost That Quietly Slows Every SOC

In many SOCs, highly skilled analysts spend their time on tasks that could have been automated five years ago. This is the least visible and most expensive form of waste. It does not show up as a line item in the budget. It shows up in everything the team is not doing.

A customer of ours captured the thinking many teams share:

When analysts are busy with manual steps, they are not threat hunting, tuning detection rules, studying new adversary behaviors, or improving processes.

This is how SOCs fall behind. Not because the analysts are incapable, but because their time is misallocated. Attackers innovate faster than teams can adjust. That gap widens when analysts are stuck doing repetitive tasks rather than strategic work.

A Better Path: Give Analysts the Power to Automate Their Own Work

SOCs have tried to fix these problems by hiring more people. That has not worked. They have tried building automation through security engineering teams. That added new bottlenecks. They have tried hiring outsourced help. That created inconsistency and decreased visibility.

What works, and what the most forward-thinking SOCs are now adopting, is a different approach. Automation belongs with the analysts, not with developers or specialized engineers.

One analyst put it simply: “We are bringing the ability to automate to the analyst. It is about self-empowerment.”

When analysts can automate the steps they repeat every day, they stop depending on engineering cycles. They stop waiting for API integrations. They no longer need someone with Python skills to script the basics.

This shift changes the entire rhythm of the SOC.

The Role of AI SOC in Quality and Consistency

For years, automation required an engineering mindset. Tools demanded scripting, manual API work, and knowledge of multiple integrations. Analysts were forced to rely on others. As a result, automation never became widespread.

That reality is changing. Browser-based tools like Legion can now capture workflows directly from the analyst’s actions. No API configuration. No scripts. No custom requests. Analysts can drag and drop steps, adjust logic, or describe edits in natural language.

A customer of ours said it plainly:

This matters because it removes the old automation bottleneck. It lets analysts fix their own inefficiencies as soon as they see them.

Turning Senior Expertise into a Force Multiplier

A SOC becomes stronger when its best analysts teach others how they think. Historically, this type of knowledge transfer was slow and informal. New hires watched over shoulders. Senior staff answered endless questions. Training varied widely depending on who happened to be available.

Now teams record their own best work and turn it into reusable, repeatable workflows.

One analyst described the shift: “Senior people record their workflows and junior people run them. You share expertise and bring everyone to the level of your top people.”

Another added: “It is a useful training tool because junior folks can see what the investigation looks like and understand the decision-making in each step.”

This approach does more than speed up onboarding. It locks valuable expertise into the system so it can be reused at any time.

Real Results: More Output With the Team You Already Have

When repetitive work is automated, analysts suddenly have time. This is where the economic impact becomes impossible to ignore.

One organization measured the difference:

Another organization brought an entire outsourced SOC back in-house. Their automation results gave them enough capacity and quality improvements to cancel a seven-figure managed services contract. The CISO wanted consistent quality. The SOC manager wanted efficiency. Legion delivered both.

The manager became the hero of the story because he did not ask for more people. He made better use of the ones he already had.

Where to Begin If You Want to Reduce These Hidden Costs

You do not need a complete transformation plan to get started. Most SOCs can begin reducing waste immediately by focusing on a few straightforward steps.

1. Identify high-frequency workflows: Look for anything repetitive, especially tasks that happen dozens of times per day.

2. Ask analysts to document their steps: This becomes the foundation for automation and reveals inconsistencies. We do this at Legion through a simple recording process.

3. Build automation for the repetitive use cases: Let analysts automate on their own without developers. This creates speed and value for repetitive work.

4. Track real metrics: MTTI/MTTR, MTTA (mean time to acknowledge), onboarding time (a time-to-value metric), and workflow usage.

5. Encourage a culture of sharing: When people share workflows, the entire team improves faster. There are almost always steps that differ between analysts.
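For the metrics in step 4, a minimal sketch of how MTTA and MTTR might be computed from alert timestamps follows. The record layout (`created`, `acknowledged`, `resolved`) and the sample values are assumptions made for illustration, not an export format from Legion or any specific SIEM.

```python
from datetime import datetime
from statistics import mean


def mean_minutes(alerts: list, start_key: str, end_key: str):
    """Average minutes between two timestamps, skipping alerts
    where the end timestamp has not been recorded yet."""
    deltas = [
        (a[end_key] - a[start_key]).total_seconds() / 60
        for a in alerts
        if a.get(end_key) is not None
    ]
    return mean(deltas) if deltas else None


# Hypothetical alert records.
alerts = [
    {"created": datetime(2025, 1, 6, 9, 0),
     "acknowledged": datetime(2025, 1, 6, 9, 4),
     "resolved": datetime(2025, 1, 6, 9, 40)},
    {"created": datetime(2025, 1, 6, 10, 0),
     "acknowledged": datetime(2025, 1, 6, 10, 10),
     "resolved": datetime(2025, 1, 6, 11, 0)},
    {"created": datetime(2025, 1, 6, 11, 0),
     "acknowledged": datetime(2025, 1, 6, 11, 1),
     "resolved": None},  # still open, excluded from MTTR
]

mtta = mean_minutes(alerts, "created", "acknowledged")
mttr = mean_minutes(alerts, "created", "resolved")
print(f"MTTA: {mtta:.1f} min, MTTR: {mttr:.1f} min")
```

Tracking these per workflow, before and after automation, is one way to see whether the change is actually saving analyst time.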

Small shifts compound quickly. Capacity increases. Quality rises. Analysts feel more ownership and less drain.

The SOC of the Future Makes Better Use of Human Talent

The SOCs that succeed over the next decade will not be the ones that hire the most people. They will be the ones that make the smartest use of the people they already have.

When you eliminate the hidden costs, you unlock the real value of your team. Human judgment, intuition, and creativity become the focus again. That is the work analysts want to do. And it is the work that actually strengthens your defenses.

Most SOCs are not struggling with a talent shortage. They are struggling with talent waste. Learn how Legion is helping enterprises solve the SOC talent management crisis.

At Legion, we spend as much time thinking about how we build as we do about what we build. Our engineering culture shapes every decision, every feature, and every customer interaction.

This isn’t a manifesto or a slide in a company deck. It’s a candid look at how our team actually works today, what we care about, and the kind of engineers who tend to thrive here.

We build around four core ideas: Trust, Speed, Customer Obsession, and Curiosity. The rest flows from there.

1. High Ownership, Zero Silos

The foundation of engineering at Legion is simple: we trust you, and you own what you build.

We don’t treat engineering like an assembly line. Every engineer here runs the full loop:

- Shaping the problem and the solution

- Designing and implementing backend, frontend, and AI pieces

- Getting features into production

- Watching how customers actually use what you shipped

That level of ownership creates accountability, but it also creates pride. You see the full impact of your work.

However, ownership doesn’t mean you’re on your own. We don’t build in silos. We are a team that constantly supports each other, whether that’s brainstorming a solution, helping a teammate get unblocked, or just acting as a sounding board.

Part of owning your work is bringing the team along with you. It means communicating your plan and ensuring everyone is aligned on how your work fits into the bigger picture. Collaboration isn't just a process here; it's how we succeed. You own the outcome, but you have the whole team behind you.

Trust is what makes this possible. We don’t track hours or measure success by time spent at a desk. People have kids, partners, lives, good days, and off days. What matters is that we deliver great work and move the product forward. How you organize your time to do that is up to you.

2. Speed Wins (And Responsiveness Matters)

We care a lot about speed, but not the chaotic, “everything is a fire drill” version.

Speed for us means short feedback loops, small and frequent releases, and fixing issues quickly when they appear.

When a customer hits a bug or something breaks, that becomes our priority. We stop, understand the problem, fix it, and close the loop. A quick, thoughtful fix often does more to build trust than a big new feature.

On the feature side, we favor progress over perfection. We’d rather ship a smaller version this week, watch how customers react, and iterate, rather than spend months polishing something in isolation.

Speed doesn’t mean cutting corners. It means learning fast and moving forward with intention. If you like seeing your work in production quickly, and you’re comfortable with the responsibility that comes with that, you’ll fit in well.

3. Customer-Obsessed: Building What They Actually Need

It’s easy for engineering teams to get lost in the code and forget the human on the other side of the screen. We fight hard against that.

We are obsessed with building features that genuinely help our customers, not just features that are fun to code. To do that, we stay close to them. We make a point of hearing directly from users, not just to fix bugs, but to understand the reality of their work and what they truly need to make it easier.

That direct connection builds empathy. It helps us understand why we are building a feature, not just how to implement it. This ensures we don’t waste cycles building things nobody wants. When you understand the core problem, you build a better product, one that delivers real value from day one.

4. Curiosity: We Build for What’s Next

AI is at the center of everything we do at Legion, and that means working in a landscape that changes every week.

We can’t afford to be comfortable with the tools we used last year. We look for engineers who are genuinely curious, the kind of people who play with new models just to see what they can do.

We proactively invest time in emerging technology, knowing that early experimentation is how we define the next industry standard. If you prefer a job where the tech stack never changes, and the roadmap is set in stone for 18 months, you probably won’t enjoy it here. But if you love the chaos of innovation and figuring out how to apply new tech to real security problems, you’ll fit right in.

So, is this for you?

Ultimately, we are trying to build the kind of team we’d want to work in ourselves.

It’s an environment that tries to balance the energy of collaboration in our Tel Aviv office with the quiet focus needed for deep work at home. We try to keep things simple: we are candid with each other, and we value getting our hands dirty over managing processes.

If you want to be part of a team where you are trusted to own your work and move fast, come talk to us. Let’s build something great together.

VP of R&D Michael Gladishev breaks down how the team works, why curiosity drives everything, and what kind of engineers thrive in a zero-ego, high-ownership environment.

The first publicly documented, large-scale AI-orchestrated cyber-espionage campaign is now out in the open. Anthropic disclosed that threat actors (assessed with high confidence as a Chinese state-sponsored group) misused Claude Code to run the bulk of an intrusion targeting roughly 30 global organizations across tech, finance, chemical manufacturing, and government.

This attack should serve as a wake-up call, not because of what it is, but because of what it enables. The attackers used pre-written scripts and known vulnerabilities, with AI primarily acting as an orchestration and reconnaissance layer: a "script kiddie" rather than a fully autonomous hacker. This is just the start.

In the near future, the capabilities demonstrated here will rapidly accelerate. We can expect to see actual malware that writes itself, finds and exploits vulnerabilities on the fly, and evades defenses in smart, adaptive ways. This shift means that the assumptions guiding SOC teams are changing.

What Actually Happened: The Technical Anatomy

The most critical takeaway from this campaign is not the technology used, but the level of trust the attackers placed in the AI. By trusting the model to carry out complex, multi-stage operations without human intervention, they unlocked significant, scalable capabilities far beyond human tempo.

1. Attackers “Jailbroke” the Model

Claude’s safeguards weren’t broken with a single jailbreak prompt. The actors decomposed malicious tasks into small, plausible “red-team testing” requests. The model believed it was legitimately supporting a pentest workflow. This matters because it shows that attackers don’t need to “break” an LLM. They just need to redirect its context and trust it to complete the mission.

2. AI Performed the Operational Heavy Lifting

The attackers trusted Claude Code to execute the campaign autonomously as an agentic chain:

- Scanning for exposed surfaces

- Enumerating systems and sensitive databases

- Writing and iterating exploit code

- Harvesting credentials and moving laterally

- Packaging and exfiltrating data

Humans stepped in only at a few critical junctures, mainly to validate targets, approve next steps, or correct the agent when it hallucinated. The bulk of the execution was delegated, demonstrating the attackers’ trust in the AI’s consistency and thoroughness.

3. Scale and Tempo Were Beyond Human Patterns

The agent fired thousands of requests. Traditional SOC playbooks and anomaly models assume slower human-driven actions, distinct operator fingerprints, and pauses due to errors or tool switching. Agentic AI has none of those constraints. The campaign demonstrated a tempo and scale that is only possible when the human operator takes a massive step back and trusts the machine to work at machine speed.

4. Anthropic Detected It and Shut It Down

Anthropic flagged abnormal usage patterns in its logs, disabled the accounts involved, alerted impacted organizations, worked with governments, and released a technical breakdown of how the AI was misused.

The Defender’s Mandate: Adopt and Trust Defensive AI

Attackers have already made the mental pivot, treating AI as a trusted, high-velocity force multiplier for offense. Defenders must meet this shift head-on. If you don't adopt defensive AI, you are falling behind adversaries who already have.

Defenders must further adopt AI and trust it to carry out workflows where it has a decisive advantage: consistency, thoroughness, speed, and scale.

1. Attack Velocity Requires Machine Speed Defense

When an agent can operate at 50–200x human tempo, your detection assumptions rot fast. SOC teams need to treat AI-driven intrusion patterns as high-frequency anomalies, not human-like sequences.

2. Trust AI for High-Volume, Deterministic Workflows

Existing detection pipelines tuned on human patterns will miss sub-second sequential operations, machine-generated payload variants, and coordinated micro-actions. Agentic workloads look more like automation platforms than human operators.

Defenders need to accept the uncomfortable reality that manual triage for these types of intrusions is pointless. You need systems that can sift through massive alert loads and isolate and contain suspicious agentic behavior as it unfolds.

This is where the defense’s trust must be applied. Only the genuinely complex cases should ever reach a human. The SOC must delegate and trust AI to handle triage, investigation, and response with machine-like consistency.

3. “AI vs. AI” is No Longer Theoretical

Attackers have already made the mental pivot: AI is a force multiplier for offense today. Defenders need to accept the same reality. And Anthropic said this out loud in their conclusion:

“We advise security teams to experiment with applying AI for defense in areas like SOC automation, threat detection, vulnerability assessment, and incident response.”

That’s the part most vendors avoid saying publicly, because it commits them to a position. If you don’t adopt defensive AI, you’re falling behind adversaries who already have.

Where SOC Teams Should Act Now

Build Detection for Agentic Behaviors

Start by strengthening detection around behaviors that simply don’t look human. Agentic intrusions move at a pace and rhythm that operators can’t match: rapid-fire request chains, automated tool-hopping, endless exploit-generation loops, and bursty enumeration that sweeps entire environments in seconds. Even lateral movement takes on a mechanical cadence with no hesitation.

These patterns stand out once you train your systems to look for them, but they won’t surface through traditional detection tuned for human adversaries.
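One simple way to start looking for that cadence is a sliding-window rate check per session. The sketch below is illustrative only: the event shape, the one-second window, and the threshold of ten requests are assumptions to be tuned against your own baseline, and a production detector would combine this with other signals.

```python
from collections import defaultdict, deque


def flag_machine_tempo(events, window_s: float = 1.0, max_requests: int = 10) -> set:
    """Flag sessions that exceed max_requests within any window_s-second span.

    events: iterable of (timestamp_seconds, session_id), sorted by timestamp.
    """
    recent = defaultdict(deque)   # session_id -> timestamps inside the window
    flagged = set()
    for ts, session in events:
        window = recent[session]
        window.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while window and ts - window[0] > window_s:
            window.popleft()
        if len(window) > max_requests:
            flagged.add(session)
    return flagged


# Hypothetical traffic: a human clicking every few seconds,
# and an agent issuing 50 requests in under a second.
human = [(i * 3.0, "analyst-laptop") for i in range(5)]
agent = [(i * 0.02, "unknown-session") for i in range(50)]
events = sorted(human + agent)

print(flag_machine_tempo(events))
```

The human session never has more than one request in the window, while the agent session crosses the threshold almost immediately. Legitimate automation will trip the same check, so an allowlist of known service accounts is a likely companion to a rule like this.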

Make AI a Core Strategy, Not an Experiment

Start adopting AI to counter specific offensive AI use cases while your human SOC keeps to its routine.

Defenders have to meet this shift head-on and start using AI against the very tactics it enables. The volume and velocity of these intrusions make manual triage pointless.

You need systems that can sift through massive alert loads, isolate and contain suspicious agentic behavior as it unfolds, generate and evaluate countermeasures on the fly, and digest massive log streams without slowing down. Only the genuinely complex cases should ever reach a human. This isn’t aspirational thinking; attackers have already proven the model works.

Key Takeaway

For SOC teams, the takeaway is that defense has to evolve at the same pace as offense. That means treating AI as a core operational capability inside the SOC, not an experiment or a novelty.

The Defender’s AI Mandate: Trust AI to handle tasks where it excels: consistency, thoroughness, and scale.

The Defender’s AI Goal: Delegate volume and noise to defensive AI agents, freeing human analysts to focus only on genuinely complex, high-confidence threats that require strategic human judgment.

Legion Security will continue publishing analysis, defensive patterns, and applied research in this space. If you want a deeper dive into detection signatures or how to operationalize defensive AI safely, just say the word.

The Anthropic AI espionage case proves attackers trust autonomous agents. To counter machine-speed threats, SOCs must adopt and trust AI to handle 90% of the defense workload.