F5 Breach Overview & Guide for SOC Teams

The August 2025 F5 breach exposed BIG-IP source code and internal vulnerability data, giving attackers a roadmap to future exploits. Learn how SOC teams can identify exposure, secure management interfaces, patch fast, and hunt for early compromise indicators before adversaries strike.

On August 9, 2025, F5 detected that a “highly sophisticated nation-state threat actor” had maintained long-term, persistent access to parts of F5’s internal network (development, engineering, and knowledge management). (ref: Rapid7/Tenable via The Hacker News)

The actor exfiltrated files from F5’s environment, including portions of the BIG-IP source code, internal data on vulnerabilities, and configuration/implementation details for a small subset of F5 customers. The breach gives adversaries the ability to identify or weaponize vulnerabilities in F5 products before general detection or patching cycles. CISA issued Emergency Directive ED 26-01, calling this an imminent threat to networks running F5 devices.

While F5 reports no evidence yet of active exploitation of undisclosed vulnerabilities or supply-chain tampering, the latent risk is very high.

Why This Matters to the SOC

Devices such as BIG-IP (and related F5 appliances/software) sit at high-value network chokepoints, including load balancing, application delivery, VPN/Edge access, and WAF. A successful exploit here can yield broad lateral reach.

The attacker now has access to source code and internal vulnerability documentation, which dramatically reduces the time and effort required to craft bespoke zero-day exploits. Because many organizations delay patching or leave F5 management interfaces externally exposed, the window for exploitation is wider still.

Even though F5 says there is no evidence yet of the software build or release pipeline being tampered with, you must assume adversaries could exploit this vector in the future.

Key Assets/Systems to Focus On

Alerts/Monitoring: What to Set Up Immediately

Here are recommended alerts and monitoring rules to implement. Depending on your toolset (SIEM, EDR, NDR, device logs), tailor accordingly.

Threat-Hunting Scenarios

Recommended Immediate Steps for SOC / IR Teams

Key Intelligence Sources

- F5’s own Security Notice (KB K000154696) covering details of the incident

- CISA ED 26-01 and associated advisory for federal agencies (also applicable to the private sector)

- Vendor advisories & CVE list from F5 (October 2025 Quarterly Security Notification) containing patched vulnerabilities

- Threat-intelligence vendor blogs, such as Rapid7, for IOCs and detection rule updates

Takeaways

The F5 breach is more than a vendor incident. It signals a major change in how capable and prepared nation-state actors have become. By stealing source code and internal vulnerability data, attackers have gained deep insight into how F5 products are built and secured. They no longer need to spend time discovering weaknesses; they can start exploiting them.

Every unpatched or misconfigured F5 device should now be viewed as a potential target. This breach shows how critical it is to treat infrastructure software as part of your attack surface. Assume adversaries understand your systems as well as you do, if not better.

Over the next month, your focus should be clear:

- Build complete visibility into every F5 device and interface in your network.

- Isolate management access and enforce strict authentication.

- Patch aggressively and verify every update.

- Monitor continuously for configuration changes or unusual traffic.

- Hunt actively for early indicators of compromise.
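The first three priorities above can be turned into a quick inventory triage. The following is a minimal sketch, not a vendor tool: the `F5Device` record, its field names, and the version strings are all hypothetical placeholders, and the list of patched versions would have to come from F5's own advisories. It flags devices whose management address is globally routable, whose management access is unrestricted, or whose version is not on your patched list.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class F5Device:
    # Hypothetical inventory record; field names are illustrative only.
    hostname: str
    mgmt_ip: str
    version: str
    mgmt_acl_restricted: bool  # True if management access is limited to an admin network


def is_publicly_routable(ip: str) -> bool:
    """Return True when the address is globally routable (not private, loopback, etc.)."""
    return ipaddress.ip_address(ip).is_global


def triage(devices: list[F5Device], patched_versions: set[str]) -> list[tuple[str, list[str]]]:
    """List each device that needs attention, with the reasons why."""
    findings = []
    for d in devices:
        reasons = []
        if is_publicly_routable(d.mgmt_ip):
            reasons.append("management interface on a public address")
        if not d.mgmt_acl_restricted:
            reasons.append("management access not restricted")
        if d.version not in patched_versions:
            reasons.append("version not on the patched list")
        if reasons:
            findings.append((d.hostname, reasons))
    return findings


# Placeholder data: addresses and version labels are made up for the example.
inventory = [
    F5Device("lb-edge-01", "8.8.8.8", "example-old", False),
    F5Device("lb-core-01", "10.0.5.10", "example-fixed", True),
]
for host, reasons in triage(inventory, patched_versions={"example-fixed"}):
    print(host, "->", "; ".join(reasons))
```

A check like this only covers what is already in your inventory, so it complements discovery scanning rather than replacing it.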

This breach is a warning. Acting now, with urgency and precision, is the difference between staying ahead of the next wave of exploits and being caught by it.

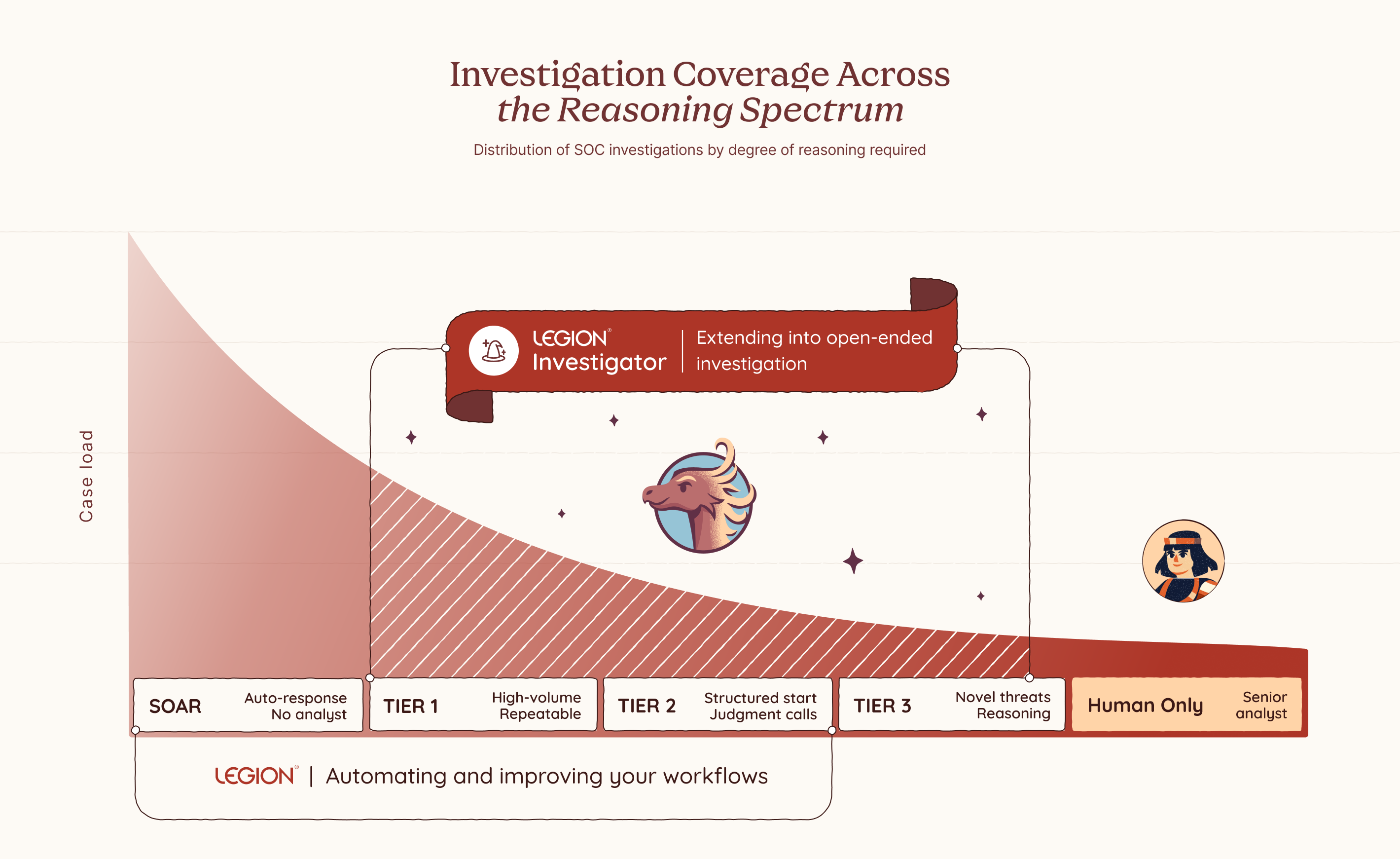

SOC investigations range widely. Some are highly repeatable: every step defined, every decision documented. These work well and can be fully automated. But some investigations eventually reach a point where that breaks down: where the next step depends on what you just found, and on the judgment and intuition to know what it means.

You can see it clearly the moment you try to write it down. Some processes flow neatly from start to finish. But as soon as you move into more complex investigations, the cracks appear. You find yourself pulled into a spiral of edge cases, tool variations, and fallback paths. You add branches. Then branches on branches. And after all that effort, you almost always end up in the same place: where no rule applies, and only judgment, reasoning and intuition can take you further.

The Part You Can Never Quite Capture

SOC investigations don't all look the same. Some are fully deterministic: a user notification when an outgoing email gets blocked, no reasoning required. For these, consistency matters. The same steps, the same outcome, every time. Others are the opposite: novel threats with no fixed path, no known pattern, where only experience, intuition, and judgment can tell you what to do next. And many fall somewhere in between, where you start with structure and hit a point where judgment has to take over.

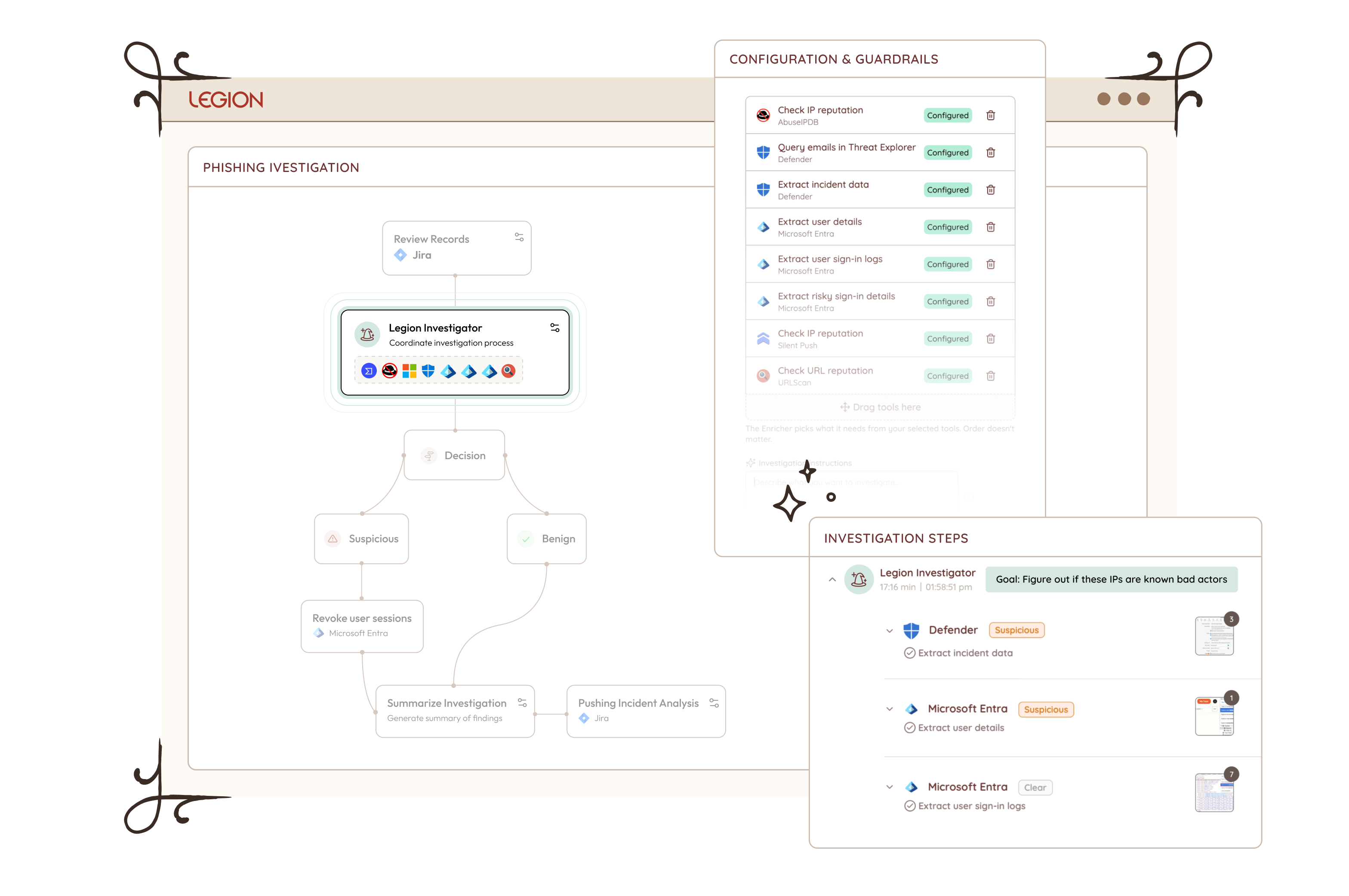

But even those flows have a ceiling. Take a phishing investigation. You can document the triage steps pretty cleanly: check the sender, analyze the headers, detonate the attachment, check the URLs. That part is routine and capturable. But the moment you find something suspicious, the investigation shifts. Now you need to reason about scope: is this part of a campaign, and who else was hit? That question has no fixed answer. You might search for other emails with the same subject, but any decent campaign will vary the lures across targets, changing subjects, sender names, and payload links to evade detection. You cannot match on a single field and call it done. You need to iterate: follow one thread, see what it reveals, adjust your search, go again. You are reading the environment in real time, making judgment calls at every step based on what the last one uncovered.
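The iterate-and-pivot pattern described above can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any product: the `Email` record and its fields are hypothetical and heavily simplified, and a real mailbox search would go through your mail platform rather than an in-memory list. The point is that each round pivots on every indicator discovered so far, so a message that shares nothing with the original lure can still be reached through an intermediate one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Email:
    # Hypothetical, simplified message record for illustration.
    msg_id: str
    sender: str
    subject: str
    url_domain: str


def indicators(e: Email) -> set[tuple[str, str]]:
    """Fields an analyst might pivot on, tagged by type."""
    return {("sender", e.sender), ("subject", e.subject), ("url_domain", e.url_domain)}


def expand_campaign(seed: Email, mailbox: list[Email], max_rounds: int = 10) -> set[str]:
    """Pivot on newly discovered indicators until a round finds nothing new."""
    known = indicators(seed)
    matched = {seed.msg_id}
    for _ in range(max_rounds):
        new_hits = [e for e in mailbox if e.msg_id not in matched and indicators(e) & known]
        if not new_hits:
            break
        for e in new_hits:
            matched.add(e.msg_id)
            known |= indicators(e)
    return matched


seed = Email("A", "billing@one.example", "Invoice overdue", "pay.bad.example")
mailbox = [
    seed,
    Email("B", "hr@two.example", "Payroll update", "pay.bad.example"),   # shares the URL domain
    Email("C", "hr@two.example", "Benefits change", "docs.bad.example"),  # shares B's sender only
    Email("D", "news@ok.example", "Weekly digest", "ok.example"),         # unrelated
]
print(sorted(expand_campaign(seed, mailbox)))
```

Matching on the subject alone would find only message A here. The loop also shows where judgment stays necessary: a benign shared indicator, such as a common link shortener, would pull in unrelated mail, so someone still has to decide which pivots are meaningful.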

Those judgment points show up on every shift, on every alert that goes beyond the routine. Someone has to reason through them in the moment, with whatever context they have, under whatever pressure exists right now. That holds up until 3am. Until a less experienced analyst picks it up. Until alert volume means there simply isn't time to think it through properly.

That reasoning is not pre-programmed. It emerges from the finding itself. It is what a senior analyst does instinctively, and until now there has been no way to replicate it at scale. Legion Investigator is built for that moment.

Your Environment. Your Logic. Your Investigator.

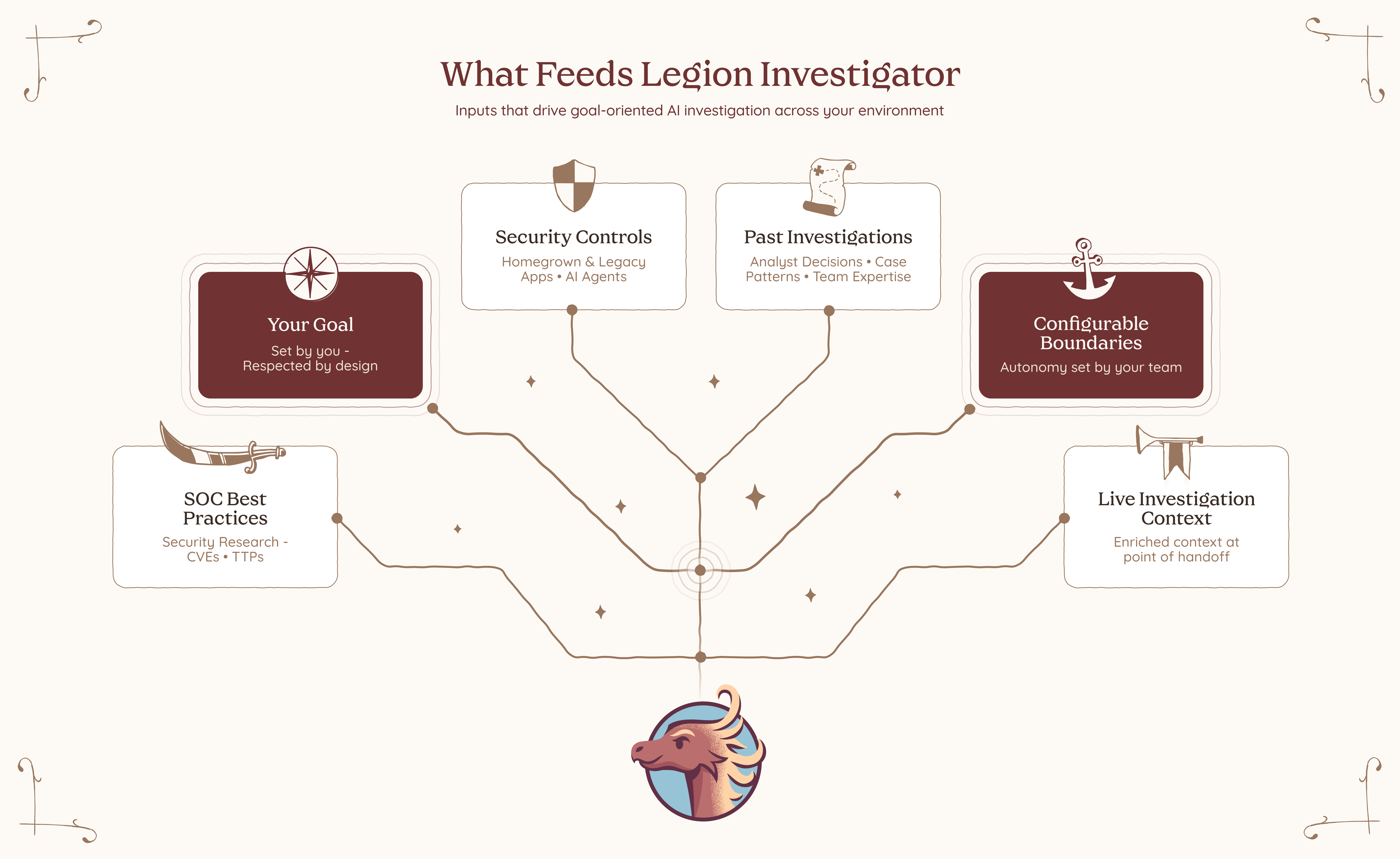

Legion Investigator is a goal-oriented AI agent that sits inside your investigation workflow at exactly the moments where reasoning takes over from execution, extending Legion's coverage across the full spectrum of SOC investigations, from fully deterministic workflows to complex open-ended investigations. You define its goal, you choose which tools and actions it is permitted to use, and you decide where it acts autonomously and where it checks in first.

Which category a given investigation falls into is sometimes obvious. But often it is a deliberate choice, one that should be yours to make based on your team's needs, your risk tolerance, and how much consistency versus flexibility the situation calls for. Where on that spectrum each investigation runs is yours to decide. Every boundary is one you set in advance and can trust will be respected. This is what makes Investigator the kind of AI enterprises can actually adopt: not just powerful, but designed from the ground up to operate within your constraints, your processes, and your level of trust.

Most AI SOC tools bring their own model of how investigations should work. Legion Investigator learns from how yours actually do. It builds its understanding from your team's recorded investigation sessions, the decisions they make, the paths they take, and the patterns that emerge across real cases in your environment. Over time, Legion builds a structured knowledge base specific to your organization, capturing your processes, your tooling, and your team's accumulated expertise. That knowledge is not just stored. It is actively used to improve your captured workflows and feeds directly into how Investigator reasons, prioritizes, and investigates.

And when we say your tools, we mean all of them. Legion Investigator works the way your analysts work, through the browser, with no integrations and no APIs required. Your SIEM, your EDR, your threat intelligence platforms, your homegrown applications, your legacy dashboards, your on-prem and cloud environments. You don’t rebuild your stack to fit the tool. The tool fits your stack.

The way it works reflects how investigations actually flow. An investigation might start in your SIEM with a set of routine queries, structured, reliable, repeatable. But when it reaches one of those decision points, you hand off to an Investigator with a goal: find the scope of breach, enrich the full context of what we have so far, identify what else was impacted across endpoints and cloud assets.

The Investigator takes that goal and works toward achieving it. It invokes the relevant tools, interprets what comes back, recalculates what to do next, and invokes again. It keeps going, step by step, until the goal is met. Not a single tool call with a result handed back to you. A full reasoning loop that runs until the work is done, across your security tools, your homegrown applications, and any AI agents already running in your environment. Investigator acts as the orchestrator, pulling in whatever is needed to get there.

Multiple Investigators can work together across a single investigation. One handles enrichment. Another determines scope of breach. A third drives containment based on what was actually found, not what was anticipated when the playbook was written.

And because trust matters, Investigator operates within guardrails. It works only with the tools and actions it’s been given permission to use. For anything higher risk, it asks before acting. You stay in control by setting the boundaries in advance and knowing they’ll be respected.
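To make the shape of that loop concrete, here is a generic sketch of a goal-driven loop with a tool allowlist and an approval gate for high-risk actions. It is not Legion's implementation or API: every name in it (`Tool`, `Investigator`, `choose_next`, `approve`) is a hypothetical stand-in, and the decision logic that a real agent would derive from reasoning is reduced to a plain function.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Tool:
    # Hypothetical tool wrapper; not a real product interface.
    name: str
    run: Callable[[dict], dict]
    high_risk: bool = False


@dataclass
class Investigator:
    goal_met: Callable[[dict], bool]               # decides when the goal is satisfied
    choose_next: Callable[[dict], Optional[str]]   # picks the next tool from current findings
    allowed: dict[str, Tool]                       # the only tools this agent may call
    approve: Callable[[str], bool]                 # human check-in for high-risk actions
    log: list[str] = field(default_factory=list)

    def investigate(self, findings: dict, max_steps: int = 20) -> dict:
        for _ in range(max_steps):
            if self.goal_met(findings):
                break
            name = self.choose_next(findings)
            if name is None:
                break
            tool = self.allowed.get(name)
            if tool is None:
                self.log.append(f"blocked: {name} not permitted")
                break
            if tool.high_risk and not self.approve(name):
                self.log.append(f"declined: {name}")
                break
            # Merge the tool's result into the findings and decide again.
            findings = {**findings, **tool.run(findings)}
            self.log.append(f"ran: {name}")
        return findings


def choose(findings: dict) -> Optional[str]:
    if "hosts" not in findings:
        return "find_hosts"
    if not findings.get("isolated"):
        return "isolate_hosts"
    return None


tools = {
    "find_hosts": Tool("find_hosts", lambda f: {"hosts": ["wks-1", "wks-2"]}),
    "isolate_hosts": Tool("isolate_hosts", lambda f: {"isolated": True}, high_risk=True),
}
agent = Investigator(
    goal_met=lambda f: f.get("isolated", False),
    choose_next=choose,
    allowed=tools,
    approve=lambda name: False,  # the human says no in this example
)
print(agent.investigate({"alert": "phish-123"}))
print(agent.log)
```

In this run the scoping step executes on its own, while the containment step stops at the approval gate. The step limit and the log are there so the loop always terminates and leaves a record of what it did and what it was refused.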

What This Changes

Legion Investigator opens up three things that weren't possible before.

Pick up where deterministic processes end

For investigations where you have structured steps, you can now embed an Investigator at exactly the points where structure runs out. The routine parts stay routine. The Investigator reasons further, and by the time you step in, the groundwork is already done.

Handle your long tail of alerts

For the long tail of investigations where you never had a well-defined flow to begin with, you can now hand them off end to end. The Investigator handles enrichment before you even open the case, drives containment the moment scope is confirmed, and picks up every judgment point in between. Give the Investigator the goal, set the guardrails, and let it run. No playbook required.

Every investigation, regardless of how well-defined it is, can now be handled with the depth of your best analyst, on every alert, on every shift. And for the first time, you control where on that spectrum each investigation runs. More structure where consistency matters. More autonomy where judgment, experience, and intuition are required. The balance is yours to set, and yours to change.

This is not about replacing analysts. It never was. There will always be moments that require human judgment, experience, and instinct, and no AI should pretend otherwise. What changes is everything around those moments. The analyst becomes the commander: setting goals, defining boundaries, sending investigators out into the environment to gather, reason, and report back. The calls that matter stay with you. The work that surrounds them no longer has to. Not because we built a smarter AI. Because we built one that learned from you.

Introducing Legion AI Investigator: AI that reasons where playbooks can't. Define the goal, set the guardrails, and let it investigate across your tools — no integrations required.

I spent a long time staring at screens that couldn't keep up. Not because the analysts weren't good, but because the volume, the speed, the sheer relentlessness of what we were defending against had already outpaced the model. Tier 1 is working a queue. Tier 2 is doing the same thing, slower with more context. Tier 3 is getting pulled into fires before they finish the last one. Humans are trying to move at machine speed. It never worked. We just found ways to cope with it not working.

On March 6th, the White House said it out loud. Its strategy states directly that the administration will "rapidly adopt and promote agentic AI in ways that securely scale network defense." It calls for AI-powered cybersecurity solutions to defend federal networks and deter intrusions at scale. It frames the cyber workforce not as the primary defense mechanism, but as the strategic asset that designs and deploys the systems that do the actual defending.

That is not subtle. That is a pivot.

I've seen a lot of strategy documents come and go. Most of them describe the problem correctly and then propose solutions that require the same broken model to execute them. More analysts. More tools. More compliance frameworks that generate reports nobody reads. This one is different in a specific way. It acknowledges that human-speed defense has a ceiling, and the adversary has already blown past it.

This matters operationally. Not because government mandates translate directly to enterprise practice, but because the logic behind the mandate is undeniable and most organizations are about two or three incidents away from being forced to confront it themselves.

Here is what I actually read in that document when I strip away the political framing:

Threat actors are using AI to accelerate attack timelines and broaden their operational surface area. The gap between when something happens and when a human analyst understands what happened is widening. That gap is where organizations get compromised. The strategy is essentially acknowledging that the only viable response to AI-accelerated offense is AI-accelerated defense. Not AI-assisted. Not AI-augmented. AI that acts.

That is exactly what we built Legion to do.

Not because we read the strategy. Because we lived through the alternative. I've watched skilled analysts spend the first forty minutes of an investigation just gathering context. Pulling logs from one tool, cross-referencing with another, chasing an IP through three different platforms before they can even form a hypothesis. That is not a people problem. That is a workflow problem. And it compounds at scale until your senior analysts are doing glorified data retrieval and your tier 1 analysts are drowning in volume they were never equipped to handle alone.

Legion addresses that problem directly. It captures how experienced analysts actually investigate (the sequences, the correlations, the judgment calls) and runs those workflows autonomously at the speed the threat environment requires. Not replacing the analyst, but removing the friction that slows the analyst. Campaign hunting, alert triage, IOC blocking, and CVE impact assessment across your entire environment all run while your team focuses on what actually requires human judgment.

The strategy also makes a point worth taking seriously. Deploying autonomous AI in your environment without understanding what it's doing is not security. It's a different kind of exposure. The document calls for securing the entire AI technology stack, and that is not bureaucratic language. That is operational reality. Any organization rushing to adopt agentic capabilities without visibility into how those agents operate and what they can access has traded one risk for another.

The teams I respect are the ones asking both questions at the same time. How do we move at machine speed? And how do we maintain accountability over the systems doing it?

The strategy just told you where the industry is going. The question is whether your operations are positioned to keep pace with it, or whether you're still trying to scale a model that was already failing before the AI arms race began.

I know which answer I kept seeing at 3 am.

The White House just pivoted: human-speed cybersecurity has reached its ceiling. Discover why the shift to agentic AI is no longer optional and how Legion is bridging the gap between machine-speed threats and human-scale defense.

Security leaders often talk about the cost of hiring analysts. Salaries, benefits, training budgets, and a recruiter or two. Those numbers are simple to track, so they tend to dominate planning conversations. The reality inside every SOC is very different. The real costs do not show up neatly in a spreadsheet. They accumulate in the gaps between processes, in the repetitive tasks analysts cannot avoid, and in the institutional drag created when people burn out or walk out the door.

Most SOCs are not struggling with a talent shortage. They are struggling with talent waste. Skilled people spend too much time on work that is beneath their capabilities. The hard truth is that this is a design problem, not a staffing problem. Until SOCs address it head-on, the cycle repeats itself: more hiring, more turnover, more loss of knowledge, more missed opportunities.

This is the part of the SOC budget most leaders still underestimate.

The Real Cost of Hiring and Ramp-Up

Hiring an analyst feels like progress. It also comes with costs that rarely get accounted for. The first few months of a new hire can be more expensive than the hire itself. Senior analysts are pulled away from active investigations to train newcomers. Work slows down. Processes become inconsistent.

One customer summarized the issue clearly: “Most of our onboarding time goes into walking new analysts through the same basic steps. If we could guide them through those workflows with Legion, our team could focus their time on real investigations.”

When experienced analysts spend their days teaching repetitive steps instead of improving detection quality or strengthening defenses, the SOC loses far more than money. It loses momentum. And momentum is what allows a team to stay ahead of attackers.

Repetitive, Boring Work Creates Predictable Burnout

Tier 1 and Tier 2 analysts often do not quit because the mission is uninspiring. They quit because the tasks are. Every SOC leader knows this, but very few have solved it. The daily flood of low-complexity alerts, routine enrichment steps, and copy-and-paste investigations grinds people down.

Burnout is not a mystery. It is the predictable result of asking talented people to repeat the same low-value tasks.

When people leave, you lose more than a seat. You lose context, intuition, and the fundamental knowledge that comes from long-term exposure to your environment. Hiring someone new does not replace that.

The Opportunity Cost That Quietly Slows Every SOC

In many SOCs, highly skilled analysts spend their time on tasks that could have been automated five years ago. This is the least visible and most expensive form of waste. It does not show up as a line item in the budget. It shows up in everything the team is not doing.

A customer of ours captured the thinking many teams share:

When analysts are busy with manual steps, they are not threat hunting, tuning detection rules, studying new adversary behaviors, or improving processes.

This is how SOCs fall behind. Not because the analysts are incapable, but because their time is misallocated. Attackers innovate faster than teams can adjust. That gap widens when analysts are stuck doing repetitive tasks rather than strategic work.

A Better Path: Give Analysts the Power to Automate Their Own Work

SOCs have tried to fix these problems by hiring more people. That has not worked. They have tried building automation through security engineering teams. That added new bottlenecks. They have tried outsourcing. That created inconsistency and reduced visibility.

What works, and what the most forward-thinking SOCs are now adopting, is a different approach. Automation belongs with the analysts, not with developers or specialized engineers.

One analyst put it simply: “We are bringing the ability to automate to the analyst. It is about self-empowerment.”

When analysts can automate the steps they repeat every day, they stop depending on engineering cycles. They stop waiting for API integrations. They no longer need someone with Python skills to script the basics.

This shift changes the entire rhythm of the SOC.

The Role of AI SOC in Quality and Consistency

For years, automation required an engineering mindset. Tools demanded scripting, manual API work, and knowledge of multiple integrations. Analysts were forced to rely on others. As a result, automation never became widespread.

That reality is changing. Browser-based tools like Legion can now capture workflows directly from the analyst’s actions. No API configuration. No scripts. No custom requests. Analysts can drag and drop steps, adjust logic, or describe edits in natural language.

A customer of ours said it plainly:

This matters because it removes the old automation bottleneck. It lets analysts fix their own inefficiencies as soon as they see them.

Turning Senior Expertise into a Force Multiplier

A SOC becomes stronger when its best analysts teach others how they think. Historically, this type of knowledge transfer was slow and informal. New hires watched over shoulders. Senior staff answered endless questions. Training varied widely depending on who happened to be available.

Now teams record their own best work and turn it into reusable, repeatable workflows.

One analyst described the shift: “Senior people record their workflows and junior people run them. You share expertise and bring everyone to the level of your top people.”

Another added: “It is a useful training tool because junior folks can see what the investigation looks like and understand the decision-making in each step.”

This approach does more than speed up onboarding. It locks valuable expertise into the system so it can be reused at any time.

Real Results: More Output With the Team You Already Have

When repetitive work is automated, analysts suddenly have time. This is where the economic impact becomes impossible to ignore.

One organization measured the difference:

Another organization brought an entire outsourced SOC back in-house. Their automation results gave them enough capacity and quality improvements to cancel a seven-figure managed services contract. The CISO wanted consistent quality. The SOC manager wanted efficiency. Legion delivered both.

The manager became the hero of the story because he did not ask for more people. He made better use of the ones he already had.

Where to Begin If You Want to Reduce These Hidden Costs

You do not need a complete transformation plan to get started. Most SOCs can begin reducing waste immediately by focusing on a few straightforward steps.

1. Identify high-frequency workflows: Look for anything repetitive, especially tasks that happen dozens of times per day.

2. Ask analysts to document their steps: This becomes the foundation for automation and reveals inconsistencies. We do this at Legion through a simple recording process.

3. Build automation for the repetitive use cases: Let analysts automate on their own without developers. This creates speed and value for repetitive work.

4. Track real metrics: MTTI/MTTR, MTTA (mean time to acknowledge), onboarding time (a time-to-value metric), and workflow usage.

5. Encourage a culture of sharing: When people share workflows, the entire team improves faster. There are almost always steps that differ between analysts.
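For step 4, the time-based metrics are simple averages once alert timestamps are available. The sketch below is one way to compute them and rests on assumptions: the `Alert` record and its field names are hypothetical, MTTA is taken as creation to acknowledgement, and MTTR is taken as creation to resolution. Teams define these intervals differently, so adjust the subtraction to match your own definitions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Alert:
    # Hypothetical alert record; timestamp field names are illustrative.
    created: datetime
    acknowledged: datetime
    resolved: datetime


def mean_minutes(deltas: list[timedelta]) -> float:
    """Average a list of durations and return the result in minutes."""
    return mean(d.total_seconds() for d in deltas) / 60


def mtta(alerts: list[Alert]) -> float:
    """Mean time to acknowledge: creation to acknowledgement (assumed definition)."""
    return mean_minutes([a.acknowledged - a.created for a in alerts])


def mttr(alerts: list[Alert]) -> float:
    """Mean time to resolve: creation to resolution (assumed definition)."""
    return mean_minutes([a.resolved - a.created for a in alerts])


day = datetime(2025, 1, 6)
alerts = [
    Alert(day.replace(hour=9), day.replace(hour=9, minute=10), day.replace(hour=10)),
    Alert(day.replace(hour=9), day.replace(hour=9, minute=20), day.replace(hour=9, minute=30)),
]
print(f"MTTA: {mtta(alerts):.1f} min")  # MTTA: 15.0 min
print(f"MTTR: {mttr(alerts):.1f} min")  # MTTR: 45.0 min
```

Tracking the same numbers before and after a workflow is automated gives you a like-for-like comparison. Note that `statistics.mean` raises an error on an empty list, so guard for periods with no alerts.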

Small shifts compound quickly. Capacity increases. Quality rises. Analysts feel more ownership and less drain.

The SOC of the Future Makes Better Use of Human Talent

The SOCs that succeed over the next decade will not be the ones that hire the most people. They will be the ones who make the smartest use of the people they already have.

When you eliminate the hidden costs, you unlock the real value of your team. Human judgment, intuition, and creativity become the focus again. That is the work analysts want to do. And it is the work that actually strengthens your defenses.

Most SOCs are not struggling with a talent shortage. They are struggling with talent waste. Learn how Legion is helping enterprises solve the SOC talent management crisis.