Basics & Security Analysis of AI Protocols: MCP, A2A, and AP2

Explore the security analysis of AI protocols shaping the future of AI. MCP, A2A, and AP2 form the backbone of agentic systems but without strong safeguards, these protocols could expose the next generation of AI infrastructure to serious security risks.

The AI industry is heading into an agent-driven future, and three protocols are emerging as the plumbing for AI: Anthropic's Model Context Protocol (MCP), Google's Agent-to-Agent (A2A) protocol, and the newly announced Agent Payments Protocol (AP2). Each is critical for AI infrastructure, but as we've learned repeatedly in cybersecurity, convenience and security rarely come hand in hand.

Having analyzed these protocols from both technical implementation and security perspectives, the picture that emerges is both promising and deeply concerning. We're building the interstate highway system for AI agents, but we're doing it without proper guardrails, traffic controls, or even basic security checkpoints.

The Protocol Trinity: Different Problems, Converging Solutions

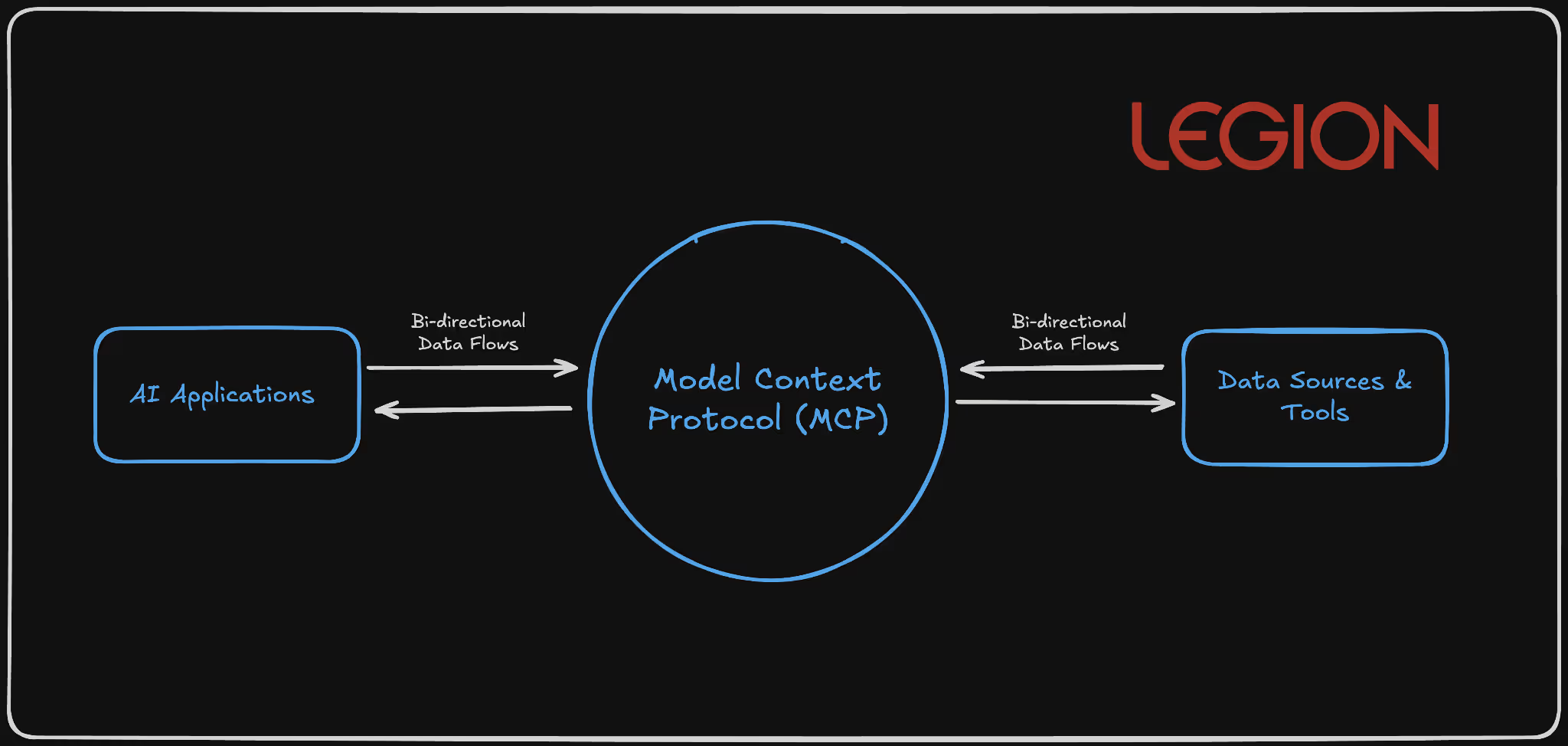

Model Context Protocol (MCP): The Universal Connector

MCP functions as a standardized bridge between AI models and external systems through a client-server architecture. MCP clients (embedded in applications like Claude Desktop, Cursor IDE, or custom applications) communicate with MCP servers that expose specific capabilities through a JSON-RPC-based protocol over stdio, SSE, or WebSocket transports.

In layman’s terms, it is essentially a universal connector that enables AI systems to communicate consistently with other software or databases. Apps use an MCP “client” to send requests to an MCP “server,” which performs specific actions in response.

Visual Representation:

Technical Architecture:

1{

2 "jsonrpc": "2.0",

3 "method": "tools/call",

4 "params": {

5 "name": "database_query",

6 "arguments": {

7 "query": "SELECT * FROM users WHERE department = 'engineering'",

8 "connection": "primary"

9 }

10 },

11 "id": "call_123"

12}

13

Scenario: Automated Threat Investigation and Response

Context: A SOC team wants to speed up the triage of security alerts coming from their SIEM (like Splunk or Chronicle). Instead of analysts manually querying multiple tools, they use MCP as the bridge between their AI assistant and their operational systems.

How MCP Fits In

- MCP Client: The SOC’s AI analyst (say, Legion) is the MCP client. It acts as the interface through which analysts ask questions, such as: “Show me the last 10 failed logins for this user and correlate with firewall traffic.”

- MCP Server: On the backend, the MCP server exposes connectors to SOC systems, for example:

- Splunk or ELK (for log searches)

- CrowdStrike API (for endpoint data)

- Okta API (for authentication events)

- Jira or ServiceNow (for case creation)

- Each connector is defined as a “tool” in the MCP schema (e.g., query_siem, get_endpoint_status, create_ticket).

Workflow Example: AI Analyst (MCP Client) → MCP Server

method: "tools/call"

params:

name: "query_siem"

arguments:

query: "index=auth failed_login user=jsmith | stats count by src_ip"

The MCP server runs the Splunk query, returns results, and the AI can then call another MCP tool:

name: "get_endpoint_status"

arguments:

host: "192.168.1.22"The AI correlates results, summarizes findings, and can automatically open an incident via:

name: "create_ticket"

arguments:

severity: "High"

summary: "Repeated failed logins detected for jsmith"

Security Considerations

- Credential aggregation risk: One compromised MCP client could expose multiple API keys (SIEM, EDR, etc.).

- Schema poisoning: If an attacker injects malicious JSON schema data, it could alter what the AI interprets or requests.

- Mitigation: Use Docker MCP Gateway interceptors and strict per-tool access scopes.

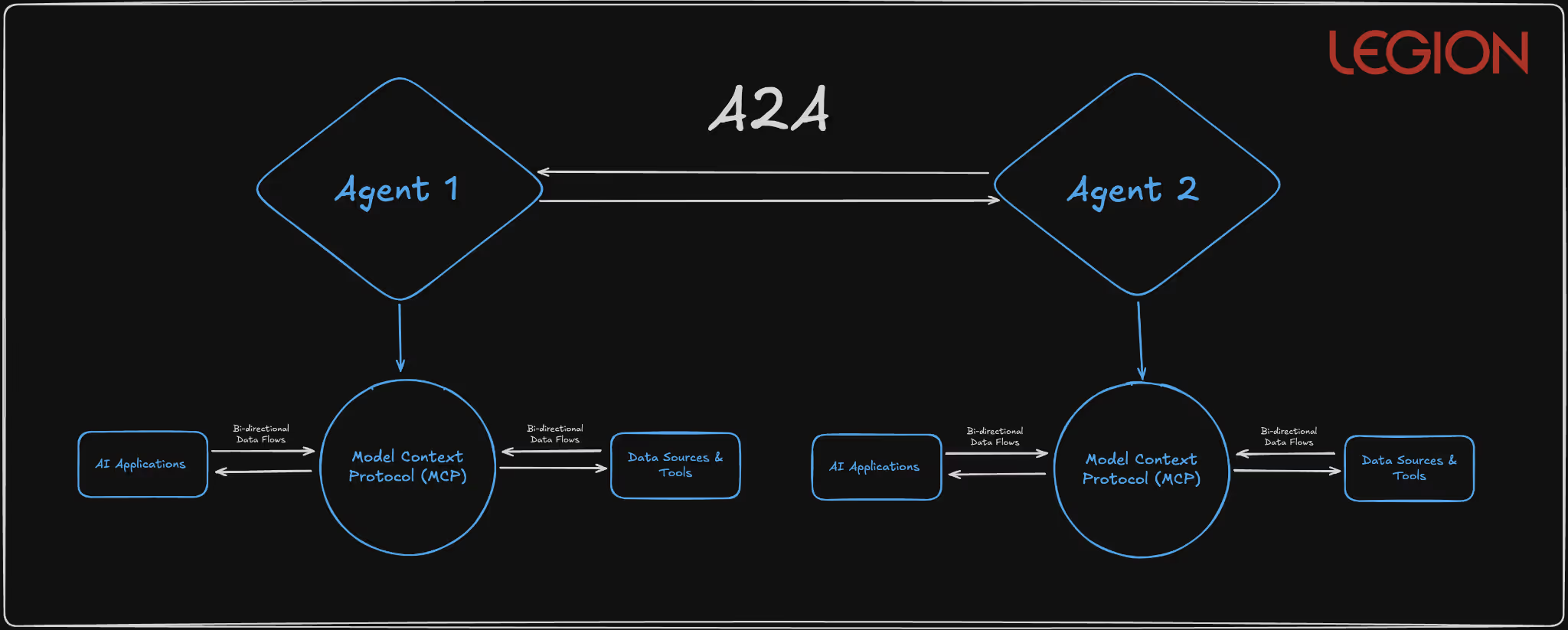

Agent-to-Agent (A2A): The Coordination Protocol

A2A enables autonomous agents to discover and communicate through standardized Agent Cards served over HTTPS and JSON-RPC communication patterns. The protocol supports three communication models: request/response with polling, Server-Sent Events for real-time updates, and push notifications for asynchronous operations.

Basically, A2A lets AI agents automatically find, connect, and collaborate with each other safely and efficiently, no humans in the loop.

Visual Representation:

Technical Protocol Structure:

{

"agent_id": "procurement-agent-v2.1",

"version": "2.1.0",

"skills": [

{

"name": "vendor_evaluation",

"description": "Analyze vendor proposals against procurement criteria",

"parameters": {

"criteria": {"type": "object"},

"proposals": {"type": "array"}

}

}

],

"communication_modes": ["request_response", "sse", "push"],

"security_requirements": {

"authentication": "oauth2",

"encryption": "tls_1.3_minimum"

}

}

Scenario: Automated Incident Collaboration Between Security Agents

Context: Your SOC runs multiple specialized AI agents: one monitors network traffic, another investigates suspicious users, another handles remediation actions (like isolating a device or resetting credentials). A2A provides the common protocol that lets these agents talk to each other directly, securely, automatically, and in real time.

How It Works in Practice

- Agent Discovery via Agent Cards

- Each SOC agent publishes an Agent Card, a digital profile that says:

- “I’m a Threat Detection Agent.”

- “I can analyze network logs and spot anomalies.”

- “Here’s how to contact me securely.”

- The A2A system keeps these cards available over HTTPS, so other agents can find and verify them.

- Each SOC agent publishes an Agent Card, a digital profile that says:

Example:

{

"agent_id": "threat-detector-v2",

"skills": ["network_log_analysis", "malware_pattern_detection"],

"authentication": "oauth2",

"encryption": "tls_1.3"

}

- Agent-to-Agent Workflow

- The Threat Detection Agent flags unusual outbound traffic from a server.

- It sends a message via A2A to the Endpoint Response Agent, saying:

“Investigate host server-22 for potential C2 beacon activity.” - The Endpoint Agent checks EDR data and replies with a summary or alert.

- Simultaneously, it notifies the Incident Coordination Agent to open a ticket in ServiceNow.

- Communication Models in Action

- Request/Response: Threat Detector asks → Endpoint Agent replies.

- Server-Sent Events: Endpoint Agent streams live scan results back.

- Push Notification: Incident Coordinator gets notified once a full report is ready.

Critical Security Concerns

- Agent Card Spoofing: Malicious agents advertising false capabilities through manipulated HTTPS-served metadata

- Capability Hijacking: Compromised agents with inflated skill advertisements capturing disproportionate task assignments

- Communication Channel Attacks: Man-in-the-middle and session hijacking on agent-to-agent communications

- Workflow Injection: Malicious agents inserting unauthorized tasks into legitimate multi-agent workflows

Agent Payments Protocol (AP2): The Commerce Enabler

AP2 extends A2A with cryptographically-signed Verifiable Digital Credentials (VDCs) to enable autonomous financial transactions. The protocol implements a two-stage mandate system using ECDSA signatures and supports multiple payment rails, including traditional card networks, real-time payment systems, and blockchain-based settlements.

Basically, AP2 lets AI agents make trusted, auditable payments automatically without a human typing in a credit card number.

Visual Representation:

Technical Mandate Structure:

{

"intent_mandate": {

"mandate_id": "im_7f8e9d2a1b3c4f5e",

"user_id": "enterprise_user_12345",

"conditions": {

"item_category": "cloud_services",

"max_amount": {"value": 5000, "currency": "USD"},

"vendor_whitelist": ["aws", "gcp", "azure"],

"approval_threshold": {"value": 1000, "requires_human": true}

},

"signature": "304502210089abc...",

"timestamp": "2025-01-15T10:30:00Z",

"expires_at": "2025-01-16T10:30:00Z"

},

"cart_mandate": {

"mandate_id": "cm_8g9h0e3b2c4d5f6g",

"references_intent": "im_7f8e9d2a1b3c4f5e",

"line_items": [

{

"vendor": "aws",

"service": "ec2_reserved_instances",

"amount": {"value": 3500, "currency": "USD"},

"contract_terms": "1_year_reserved"

}

],

"payment_method": "corporate_card_ending_1234",

"signature": "3046022100f4def...",

"execution_timestamp": "2025-01-15T11:45:00Z"

}

}

Scenario: Secure Autonomous Cloud Resource Payments

Context: Your company’s AI agents automatically manage cloud infrastructure — spinning up or shutting down virtual machines based on workload. To do that, they sometimes need to authorize and execute payments (e.g., buying more compute time or storage). AP2 allows those agents to make these payments automatically — but with strong security guardrails.

How It Works

- Step 1 – Intent Mandate (the plan)

- The agent first creates an Intent Mandate describing what it wants to do.

Example: “Purchase $2,000 worth of AWS compute credits for Project Orion.” - This mandate includes:

- Vendor whitelist (AWS only)

- Spending cap ($5,000 max)

- Expiry time (valid for 24 hours)

- Digital signature (ECDSA) proving it came from an authorized agent

- A human or rule engine reviews this intent before any money moves.

- The agent first creates an Intent Mandate describing what it wants to do.

- Step 2 – Cart Mandate (the action)

- Once the intent is approved, the agent generates a Cart Mandate — the actual payment order.

- It references the original intent, ensuring the details match (no one changed the vendor or amount).

- This mandate is also cryptographically signed and executed via a secure payment rail (e.g., corporate card API or blockchain payment).

- Security Enforcement During Payment

- Independent validator checks that:

- The intent and cart match exactly.

- The agent’s digital credential is still valid (hasn’t been revoked).

- The payment doesn’t exceed limits or policy.

- Real-time monitoring watches for anomalies:

- Multiple large payments in short time windows

- Changes to vendor lists

- Repeated failed authorizations

- Independent validator checks that:

- Audit & Traceability

- Every mandate (intent and payment) is stored with its cryptographic proof.

- Auditors can later verify every transaction end-to-end

Security Benefits

Cryptographic Signatures: Ensures that only verified agents can create or authorize payments.

Two-Stage Mandate System: Prevents “prompt injection” or unauthorized payments by requiring two consistent steps (intent → execution).

Vendor Whitelisting & Spending Caps: Limits the blast radius of any compromise.

Cross-Protocol Correlation: AP2 can check MCP/A2A activity logs before allowing a transaction — ensuring payment actions match legitimate workflows.

Immutable Audit Trail: Every payment is traceable, signed, and non-repudiable.

Without these controls, a single compromised AI could:

- Create fake purchase requests (“buy 1000 GPUs from an attacker’s vendor”)

- Manipulate prices between intent and payment

- Execute valid-looking, cryptographically signed frauds

That’s why AP2’s mandate validation and signature chaining are essential. They make it nearly impossible for a rogue or manipulated agent to spend money unchecked.

Architectural Convergence

What's fascinating is how these protocols complement each other in ways that suggest a coordinated vision for agentic infrastructure:

- MCP provides vertical integration (agent-to-tool)

- A2A enables horizontal integration (agent-to-agent)

- AP2 adds transactional capability (agent-to-commerce)

The intended architecture is clear: an AI agent uses MCP to access your calendar and email, A2A to coordinate with specialized booking agents, and AP2 to complete transactions autonomously. It's elegant in theory, but the security implications are staggering.

Implementation Recommendations: Protocol-Specific Security Controls

MCP Security Implementation

Mandatory Tool Validation Framework: Deploy comprehensive MCP server scanning that extends beyond basic description fields:

Static Analysis Requirements:

- Scan all tool metadata (names, types, defaults, enums)

- Source code analysis for dynamic output generation logic

- Linguistic pattern detection for embedded prompts

- Schema structure validation against known-good templates

Runtime Protection with Docker MCP Gateway: Implement Docker's MCP Gateway interceptors for surgical attack prevention:

# Example: Repository isolation interceptor

def github_repository_interceptor(request):

if request.tool == 'github':

session_repo = get_session_repo()

if session_repo and request.repo != session_repo:

raise SecurityError("Cross-repository access blocked")

return request

Continuous Behavior Monitoring: Deploy real-time MCP activity analysis:

- Tool call frequency analysis to detect automated attacks

- Data access pattern monitoring for unusual correlation activities

- Output analysis for prompt injection indicators

- Cross-tool interaction mapping to identify attack chains

A2A Security Architecture

Agent Authentication Infrastructure: Implement certificate-based mutual authentication for all agent communications:

Agent Registration Process:

- Certificate generation with organizational root CA

- Agent Card cryptographic signing with private key

- Capability verification through controlled testing

- Regular certificate rotation (30-day maximum)

Communication Security Controls: Establish secure communication channels with comprehensive auditing:

Required A2A Security Headers:

- X-Agent-ID: Cryptographically verified agent identifier

- X-Capability-Hash: Tamper-evident capability fingerprint

- X-Session-Token: Short-lived session authentication

- X-Audit-ID: Immutable audit trail identifier

Agent Capability Verification System: Never trust advertised capabilities without independent verification:

class AgentCapabilityVerifier:

def verify_agent(self, agent_card):

test_results = self.sandbox_test(agent_card.capabilities)

capability_match = self.validate_capabilities(test_results)

return self.issue_capability_certificate(capability_match)

AP2 Security Implementation

Mandate Validation Infrastructure: Implement independent mandate validation outside AI agent context:

Multi-Stage Validation Process:

- AI-generated Intent Mandate creation

- Independent rule-engine validation of mandate logic

- Human approval workflow for high-value transactions

- Cryptographic signing with organizational keys

- Real-time transaction monitoring against mandate parameters

Payment Transaction Monitoring: Deploy comprehensive payment pattern analysis:

class AP2TransactionMonitor:

def analyze_payment(self, mandate, transaction):

risk_score = self.calculate_risk_score(

user_history=self.get_user_patterns(),

agent_behavior=self.get_agent_patterns(),

transaction_details=transaction,

mandate_consistency=self.validate_mandate(mandate)

)

if risk_score > THRESHOLD:

return self.trigger_additional_verification()

Cross-Protocol Security Integration: Deploy unified monitoring across MCP, A2A, and AP2:

class CrossProtocolSecurityOrchestrator:

def monitor_agent_workflow(self, workflow_id):

mcp_activity = self.monitor_mcp_calls(workflow_id)

a2a_communications = self.monitor_agent_interactions(workflow_id)

ap2_transactions = self.monitor_payment_activity(workflow_id)

# Correlate activities across protocols

risk_assessment = self.correlate_cross_protocol_activity(

mcp_activity, a2a_communications, ap2_transactions

)

if risk_assessment.is_suspicious():

self.trigger_workflow_isolation(workflow_id)

The Broader IAM Implications

These protocols represent a fundamental shift in identity and access management. We're transitioning from human-centric IAM to agent-centric IAM, and our current security models are insufficient for this shift.

Derived Credentials will become essential as agents need to authenticate not just to services, but to each other. AP2's mandate system is an early attempt at this, but we need comprehensive frameworks for agent identity lifecycle management.

Contextual Authorization must replace simple role-based access control. Agents will need fine-grained permissions that adapt to context, user intent, and risk levels.

Audit Trails become exponentially more complex when multiple agents coordinate across multiple systems to complete user requests. We need new forensic capabilities for multi-agent investigations.

Bottom Line: The Infrastructure We Build Today Shapes Tomorrow's Security Landscape

After spending months analyzing these protocols and watching the industry rush toward agentic implementation, I keep coming back to a fundamental truth: we're not just deploying new technologies. We're architecting the nervous system for autonomous digital commerce and operations.

MCP, A2A, and AP2 aren't just convenient APIs or communication standards. They represent the foundational infrastructure that will determine whether the agentic economy becomes a productivity revolution or a security catastrophe. The decisions we make about implementing these protocols today will echo through decades of digital infrastructure.

The security vulnerabilities I've outlined aren't theoretical concerns, but active attack vectors being demonstrated by researchers right now. Tool poisoning attacks against MCP are working in production environments. A2A agent spoofing is trivial to execute. AP2's mandate system can be subverted through the same prompt injection techniques we've known about for years.

Here's what gives me confidence: the collaborative approach emerging around these protocols. When Google open-sources A2A with 60+ industry partners, when Docker develops security interceptors for MCP, when researchers rapidly disclose vulnerabilities and the community responds with patches. This is how robust infrastructure gets built.

The security industry spent years debating when attackers would gain capabilities once out of reach — nation-state-level offensive tooling, zero-day discovery at scale, exploits built and iterated in minutes.

That gap was real. And it gave organizations the impression that the decision about which AI to bring into security operations, and how to do it right, could wait until the picture was clearer.

Mythos ended that assumption.

Not because of the model's size or strength, but because by the time Anthropic announced it, Mythos had already found thousands of high-severity vulnerabilities across every major operating system and browser in use today, without being told where to look. The decision not to release is the signal everyone was looking for.

That changes the implementation question. It was never acceptable to deploy AI badly in the SOC. Now it's not acceptable to deploy it slowly either. The organizations that will come out on top in the next 12 months are the ones that move fast and get it right, and most of the industry is about to discover that those aren't the same thing.

Level set: defenders have always been behind

The average breach lifecycle was already 258 days before AI-assisted attacks became the norm. This has nothing to do with the capabilities of analysts. Human-speed defense against machine-speed offense was always a losing equation.

Mythos-class models will almost certainly expand this breach lifecycle delta.

Most Implementations Are Getting It Wrong

87% of organizations experienced an AI-driven cyberattack in the past year. Security teams know they need AI. Most are already moving. But most implementations are failing for the same reason, and it is not the technology. It is a missing critical datapoint.

You. The context that shapes your business.

Most AI SOC tools treat every organization as interchangeable. They integrate with your SIEM, your EDR, your threat intel platforms, and assume that is enough. It is not. What determines whether AI actually works in your environment has nothing to do with the list of integrations. It is the organizational context that no integration can capture.

How is your organization structured? Where does data actually live versus where it is supposed to live? Who owns what, and how does that map to investigation and response when something goes wrong? How do escalation paths work in practice, not on paper? And critically, how do you enable the business without interrupting it?

The difference shows up clearly in practice. A heavily regulated enterprise running investigations across proprietary internal platforms looks nothing like a technology company. The organizational context that shapes every investigation, every escalation decision, and every response action is invisible to a system that only sees tool outputs.

Closing that gap is the foundational requirement that most implementations skip entirely.

Org Context Is Not a One-Time Setup

This is where most implementations fail, even when they start well.

Organizational context is not a configuration you complete on day one. Your organization is a living thing. Teams change. Tools get added. Processes evolve. New subsidiaries appear. Risk posture shifts with every acquisition, every regulatory update, every new product line the business launches.

An AI system that ingested your context six months ago and stopped learning is already drifting from your reality. It is making decisions based on an organization that no longer exists.

The right model is not a one-off ingestion. It is a continuous learning system that stays embedded in how your organization actually operates, tracks how investigations unfold, incorporates analyst feedback, and updates its understanding as your environment changes.

Not a snapshot.

A persistent model of your specific organization that evolves with it.

What Good Implementation Actually Looks Like

First, AI systems needs to understand how your organization actually operates. Not how it is documented, but how investigations really unfold, where data actually lives, and how decisions get made under pressure. The gap between what is written down and what actually happens is where most AI systems fail.

Second, that understanding cannot be static. Organizations change constantly. New teams, new tools, new processes, new risk priorities. Any system that relies on a snapshot of your environment will drift from reality and degrade over time. The AI working in your environment needs to keep learning it, not just learn it once.

Third, it needs to operate within that context, not around it. Producing technically correct outputs is not enough. The system needs to produce outcomes that are actionable within your organization as it exists today. That means working within your existing workflows, tools, and constraints without asking you to change how you operate to accommodate it.

That is the standard. Systems built around this model behave differently from the start. They do not ask organizations to adapt to them. They adapt to the organization. That distinction is where most implementations succeed or fail, and it is where the industry is slowly converging.

The Only Durable Path

The organizations getting AI right in the SOC aren't the ones with the longest integration lists or the biggest models. They're the ones that treated organizational context as the foundation rather than the afterthought, and built systems that keep learning their environment rather than freezing it in place on day one.

That is a harder implementation. It requires more from the vendor and more from the buyer. But Mythos made the timeline for getting there non-negotiable. The organizations that move fast on the wrong implementation will spend the next year rebuilding. The ones that move slowly on the right one will spend it exposed. The only durable path is moving quickly on the version that actually holds up. Systems built on continuous organizational context, deployed now rather than after the next incident, force the question.

The gap that used to buy time for deliberation is gone. What's left is the quality of the decision you make in its absence.

.png)

Mythos ended the debate on whether AI belongs in the SOC. The new question is how to deploy it right and why organizational context is the foundation most implementations skip.

Anthropic was right (and responsible) to release Mythos first to cybersecurity researchers and a select group of organizations through Project Glasswing. It is a genuinely remarkable model. And the security community should take it seriously. What is available to defenders today will be in the hands of attackers in a few months. That window is closing fast.

Mythos raises the ceiling on what AI can do in cybersecurity tasks. It discovers zero-day vulnerabilities in codebases that previous models could not find. It reverse-engineers complex systems. It constructs sophisticated, multi-path exploits at scale. The capabilities that were previously accessible only to well-funded nation-state actors can now be replicated by a far broader set of threat actors. No longer do you need teams of expert reverse engineers and months of reconnaissance.

The threat landscape is structurally shifting. We will be determined by our ability to shift our defense in kind. Quickly.

Where AI in defense needs to go first

The industry is converging, rightly, on vulnerability research and remediation as the priority. Scanning your own codebase with the same class of models that attackers are using is a clear first step. In many cases, defenders actually have an asymmetric advantage here, as we have better access to our own code than attackers do.

The harder problem is remediation. We already carry significant backlogs of unresolved, sometimes exploitable, vulnerabilities. Unlike an attacker who has nothing to lose, defenders cannot afford mistakes. Our systems are in production. Downtime has real costs. The asymmetry of attacker agility versus defender accountability is where the gap widens.

AI-assisted vulnerability remediation at scale is necessary. But it is not a solved problem, and any honest assessment of the landscape has to acknowledge that.

What this means for security operations

The idea of static detections designed to discover dynamic adversaries is fundamentally misguided. The future is better trip wires and an assume-breach mentality.

For SOC teams, the implications are direct. The scale and complexity of attacks is accelerating. We should expect a higher volume of sophisticated attacks that actively evade detection, that do not conform to known signatures or behavioral patterns, and that are designed from the ground up to stay invisible.

This breaks the model that most SOC programs are built on. The idea of maintaining a library of static detections to catch dynamic adversaries has always had limits. Those limits are now being exposed in real time.

What we need instead is the ability to detect a high volume of low-fidelity signals, such as anomalies in endpoint behavior, data access patterns, email activity, network flows, and identity. This requires teams to investigate each one as if it were the leading edge of a sophisticated breach. Not because every alert is a nation-state intrusion, but rather, we should expect that a higher percentage now may be.

The question is no longer whether to adopt AI in security operations. This is clearly needed. We cannot scale defenses solely on human labor.

The question is how to do it in a way that actually works inside the operational reality that security teams live in.

The real challenge is operational reality

Enterprises have legacy and custom tools, established processes, compliance and audit requirements, escalation paths, and oversight obligations that are not optional. AI cannot simply replace this infrastructure. It has to work within it.

You cannot properly scale your defenses without giving AI access to your organizational context, including your tools, your processes, your detection logic, and your escalation criteria. AI agents need to be able to investigate with the consistency and rigor of an incredible IR analyst, operate transparently, and support human oversight at the points where it matters.

This is precisely what we built Legion to do: meet organizations where they are. Our platform learns your existing tools, processes and context and makes them accessible to the latest frontier models (now Mythos, and every model in the future). From that we create structured, repeatable workflows where consistency is required or fully agentic investigations that require depth and judgment. Every action is auditable. Human-in-the-loop controls are configurable. And the system integrates across your entire stack.

My conclusion - Assume breach, investigate everything, build for the attacker that has already found the vulnerability you have not patched yet, and is using Mythos-level models to stay ahead of your detections.

In the wake of Mythos and Project Glasswing, security operations teams need AI that meets them where they are.

Picture a senior analyst mid-investigation. Eight browser tabs open across CrowdStrike, VirusTotal, Defender, and Microsoft Entra. She's running a hunting query in one window, checking an IP reputation score in another. And somewhere in between, she's documenting. Taking screenshots, copying log entries into a case note, capturing context before it slips away.

This is the job. Investigations today aren't just about finding the threat. They're about moving across tools, pulling together evidence from a dozen different sources, and building a record that another analyst, or an auditor, or a manager, can actually follow. The documentation isn't a distraction from the work. It is part of the work.

Everyone in security has lived that.

Which raises a question that's been easy to ignore until now: if we wouldn't accept an analyst who said "trust me, I looked at it"- why are we accepting that from AI agents?

Evidence Has Always Been the Standard

The reason SOC analysts document isn't distrust. It's precision. A good investigation has always meant showing your work. The summary an analyst writes is their claim, the insight they've drawn from what they saw. The screenshot is the fact. Undisputable evidence, captured at the moment of discovery. Together they tell the full story: here is what I found, and here is the proof.

.png)

Evidence gathering has always been a core part of the job. Screenshots and logs aren't bureaucratic overhead. They're how you distinguish signal from noise, how you close out audit findings, how you hand off a case without losing context.

You Wouldn't Accept "Trust Me" From an Analyst. Stop Accepting It From AI

We hold human analysts to a clear standard. When an analyst closes a case, we expect to see their work. The exact screen they reviewed, the exact query they ran, the exact result that informed their decision. A summary of what they found is a claim. The screenshot is the proof.

We should hold AI agents to the same standard.

Today, most AI SOC give you a verdict and a reason. The agent processed the alert, evaluated the indicators, and concluded it was malicious. But if you ask what it actually saw, you're directed to API logs and structured JSON responses. That's not evidence. That's a reconstruction built after the fact, from data that was never meant to be read by a human auditor in the first place.

The gap between what an AI agent did and what you can actually verify is where hallucination risk lives. A summary can sound confident and still be wrong. Without visual evidence captured at the moment of the decision, you have no way to know what the system actually encountered.

Legion operates differently. Instead of calling APIs, Legion navigates your source systems directly through the browser, the same way a human analyst would. It opens the actual system, reads the actual screen, and captures a screenshot of exactly what it sees at every step. The summary is the claim. The screenshot is the fact.

That's the standard we believe AI investigations should meet. And it's the only architecture that meets it.

How Legion Automates Evidence Gathering

Legion Evidence Gathering captures visual proof of every action Legion takes as it navigates your source systems, automatically, in real time.

.png)

Take a malware investigation spanning CrowdStrike, VirusTotal, and Defender. Legion opens the originating ticket, reads the case, and begins investigating. As it moves through each tool, it takes a screenshot at every step. The CrowdStrike detection page as it appeared. The VirusTotal result in context. The Defender hunting query and its output. Every interface, exactly as Legion saw it.

By the time an analyst opens the case, the full evidence gallery is already there. Screenshots organized sequentially, labeled by tool, timestamped, and ready to review. Not just a summary. Not just a log. The complete picture: the analysis and the visual evidence behind every conclusion.

And it stays there. Every investigation Legion runs is stored and searchable. When an auditor asks a question, when a peer analyst picks up a handoff, when someone needs to understand why a decision was made, you go back to the session and everything is right there. Every step. Every screen. Nothing reconstructed. Nothing missing.

Different alert types. Different toolchains. The same complete evidence gallery, every time.

This Is What Accountable AI Looks Like

We've always known what a good investigation looks like. You show your work. You back your conclusions with evidence. You leave a record that someone else can follow. Legion applies that same standard to every automated investigation it runs, without exception and without manual effort. The bar doesn't move because the analyst is an AI. It stays exactly where it's always been.

See Legion Evidence Gathering in action. Request a Demo

.png)

Legion automates evidence gathering during AI-driven investigations, capturing screenshots from live security tools at every step, so every conclusion is backed by visual proof.